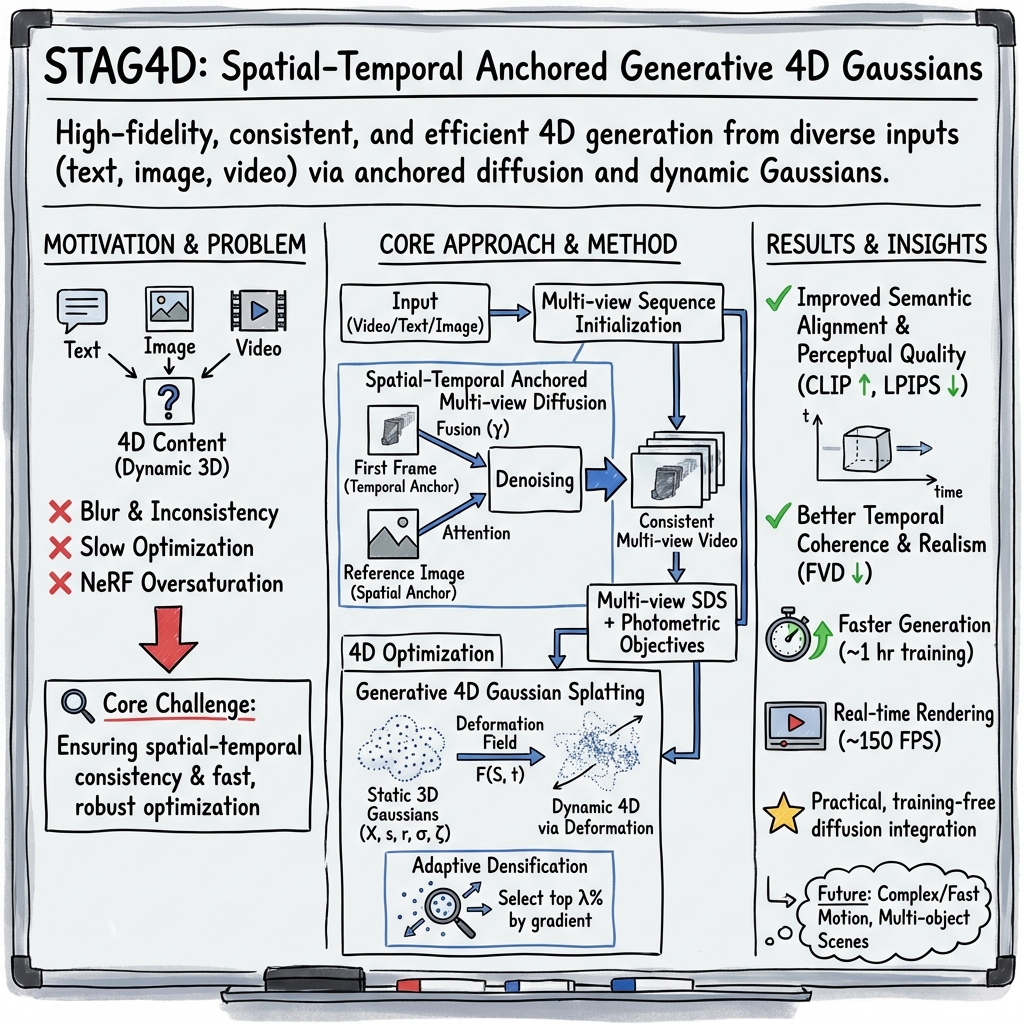

- The paper introduces a novel 4D Gaussian splatting method that leverages spatial-temporal anchors for enhanced content fidelity and dynamic scene representation.

- It employs an adaptive densification strategy and a training-free attention fusion module to streamline optimization and ensure robust multi-view integration.

- Extensive experiments demonstrate STAG4D’s superior performance, setting a new benchmark in 4D content creation with applications in VR, film production, and digital twin technologies.

Spatial-Temporal Anchored Generative 4D Gaussians for High-Fidelity 4D Content Creation

Introduction

4D content creation, the generation of 3D models that change over time, is advancing rapidly alongside pre-trained diffusion models and 3D generation techniques. Even so, generating high-fidelity 4D content that stays consistent across space and time remains a significant challenge. "Spatial-Temporal Anchored Generative 4D Gaussians" (STAG4D) addresses this with a framework that combines pre-trained diffusion models with dynamic 3D Gaussian splatting. The approach aims to improve the rendering quality and spatial-temporal consistency of 4D content, and to make generation more robust across diverse inputs such as text, images, and video.

The Methodology

4D Representation and Optimization

The cornerstone of STAG4D is a 4D Gaussian splatting mechanism tailored for the generation task. Building upon 3D Gaussian splatting, STAG4D extends this concept to dynamic scenes using a deformation field that continuously models the scene dynamics, enabling the representation of complex motions and transformations over time. To address the challenge of optimizing these 4D Gaussian points, an adaptive densification strategy is introduced. This approach dynamically adjusts the densification threshold based on the gradient of Gaussian points during the optimization process, ensuring detailed and stable optimization outcomes.
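To make the adaptive densification idea concrete, here is a minimal Python sketch. It assumes the threshold is taken as a quantile of the current per-point gradient magnitudes, so that it tracks the gradient scale instead of staying a fixed constant. That rule, the function names, and the `quantile` and `floor` parameters are illustrative assumptions; this summary does not state the exact rule STAG4D uses.

```python
def adaptive_threshold(grad_norms, quantile=0.9, floor=1e-6):
    """Hypothetical rule: use a quantile of the current gradient norms as the threshold."""
    if not grad_norms:
        return floor
    ordered = sorted(grad_norms)
    # Index of the requested quantile, clamped to the last element.
    idx = min(len(ordered) - 1, int(quantile * len(ordered)))
    # The floor keeps the threshold positive when all gradients vanish.
    return max(ordered[idx], floor)


def select_for_densification(grad_norms, quantile=0.9):
    """Return indices of Gaussian points whose gradient norm reaches the threshold."""
    threshold = adaptive_threshold(grad_norms, quantile)
    return [i for i, g in enumerate(grad_norms) if g >= threshold]


if __name__ == "__main__":
    grads = [float(i) for i in range(1, 11)]
    print(select_for_densification(grads, quantile=0.8))
```

Because the threshold moves with the gradients, rescaling every gradient by the same constant selects the same points, which a fixed threshold would not do.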

Spatial-Temporal Consistency

Achieving consistency across spatial and temporal dimensions is crucial for realistic 4D content generation. STAG4D introduces a direct fusion approach for attention computation that leverages both spatial and temporal anchors during the multi-view sequence initialization. This strategy not only bolsters the 4D spatial-temporal consistency but also circumvents the need for explicit multi-view or temporal consistency losses, thereby simplifying the optimization process.

Training-Free Attention Fusion Module

A novel aspect of STAG4D is its training-free attention fusion module, which facilitates effective integration of temporal anchor frames into the multi-view diffusion process. This module plays a pivotal role in enhancing the 4D consistency of the generated multi-view videos, significantly improving the quality and realism of the generated 4D content.
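One way to picture a training-free attention fusion is the sketch below. It assumes the fusion concatenates the keys and values of the current frame with those of a spatial anchor and a temporal anchor before a standard scaled dot-product attention, so no new weights are introduced. The concatenation rule and all names (`fused_attention`, `kv_spatial_anchor`, `kv_temporal_anchor`) are hypothetical illustrations, not the paper's confirmed formulation.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Plain scaled dot-product attention on lists of token vectors."""
    scale = math.sqrt(len(queries[0]))
    out = []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, k)) / scale for k in keys]
        weights = softmax(scores)
        dim = len(values[0])
        out.append([sum(w * v[j] for w, v in zip(weights, values)) for j in range(dim)])
    return out


def fused_attention(queries, kv_current, kv_spatial_anchor, kv_temporal_anchor):
    """Assumed fusion: attend over current-frame and anchor tokens jointly.

    Each kv_* argument is a (keys, values) pair. No parameters are learned here,
    which is what makes this kind of fusion training-free.
    """
    keys = kv_current[0] + kv_spatial_anchor[0] + kv_temporal_anchor[0]
    values = kv_current[1] + kv_spatial_anchor[1] + kv_temporal_anchor[1]
    return attention(queries, keys, values)
```

With empty anchor sets the function reduces to ordinary self-attention on the current frame, which makes the anchors' contribution easy to isolate when experimenting.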

Assessment and Implications

Extensive experiments show that STAG4D outperforms previous 4D content generation methods. Notably, it generates content twice as fast as existing video-to-4D approaches and sets a new benchmark for generation quality and robustness. These results support the effectiveness of the framework and point to applications in virtual reality, film production, and digital twin technologies.

Future Directions

Looking forward, STAG4D's implications extend beyond its immediate gains in 4D content creation. Its ability to generate 4D content from monocular videos that can be rendered in real time opens avenues for real-world applications, particularly interactive media and simulation-based training environments. Adaptive densification and the training-free attention fusion module also provide a foundation for future research in dynamic content generation and 3D model optimization.

As the field of generative AI continues to evolve, the exploration and refinement of techniques for spatial-temporal content generation will remain at the forefront of innovation. The STAG4D framework not only contributes significantly to this ongoing endeavor but also inspires further research into efficient, high-quality dynamic content creation methodologies.