Large language models in 6G security: challenges and opportunities

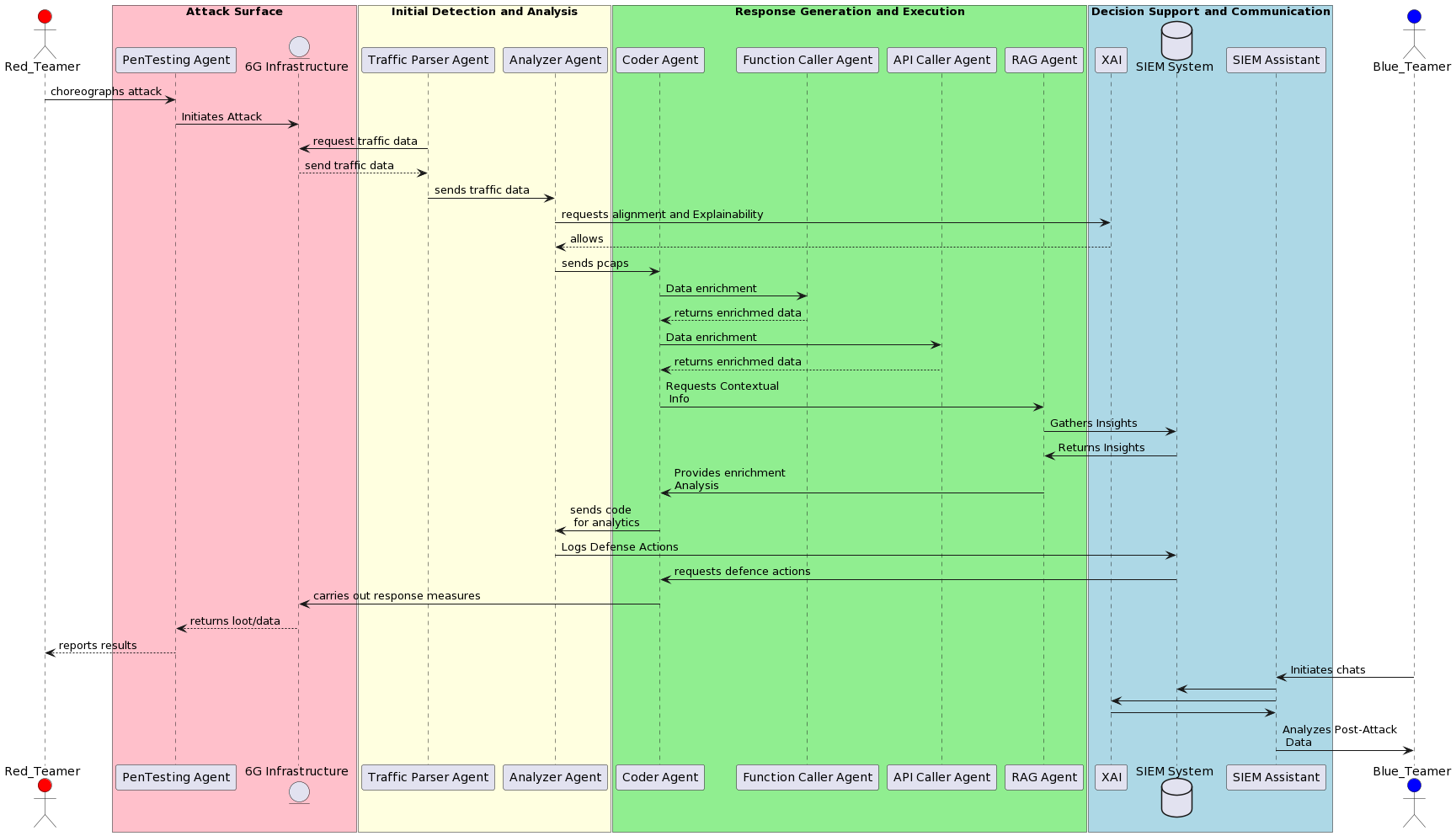

Abstract: The rapid integration of Generative AI (GenAI) and large language models (LLMs) in sectors such as education and healthcare has marked a significant advancement in technology. However, this growth has left one aspect largely unexplored: their security vulnerabilities. The surrounding ecosystem of offline and online models, tools, browser plugins, and third-party applications continues to expand, which significantly widens the attack surface and raises the potential for security breaches. In the 6G and beyond landscape, this expansion gives adversaries new avenues to manipulate LLMs for malicious purposes. We examine the security of LLMs from the viewpoint of potential adversaries, dissecting their objectives and methodologies and providing an in-depth analysis of known security weaknesses. This includes a comprehensive threat taxonomy that categorizes adversary behaviors. We also examine how defense teams, also known as blue teams, can integrate LLMs into their cybersecurity efforts. Finally, we explore the potential synergy between LLMs and blockchain technology, and how this combination could lead to next-generation, fully autonomous security solutions. The aim is a unified cybersecurity strategy across the entire computing continuum that strengthens the overall digital security infrastructure.

- OWASP Top 10 for Large Language Model Applications, 2023.

- Not what you’ve signed up for: Compromising real-world llm-integrated applications with indirect prompt injection. In Proceedings of the 16th ACM Workshop on Artificial Intelligence and Security, pages 79–90, 2023.

- A review of generative models in generating synthetic attack data for cybersecurity. Electronics, 13(2), 2024.

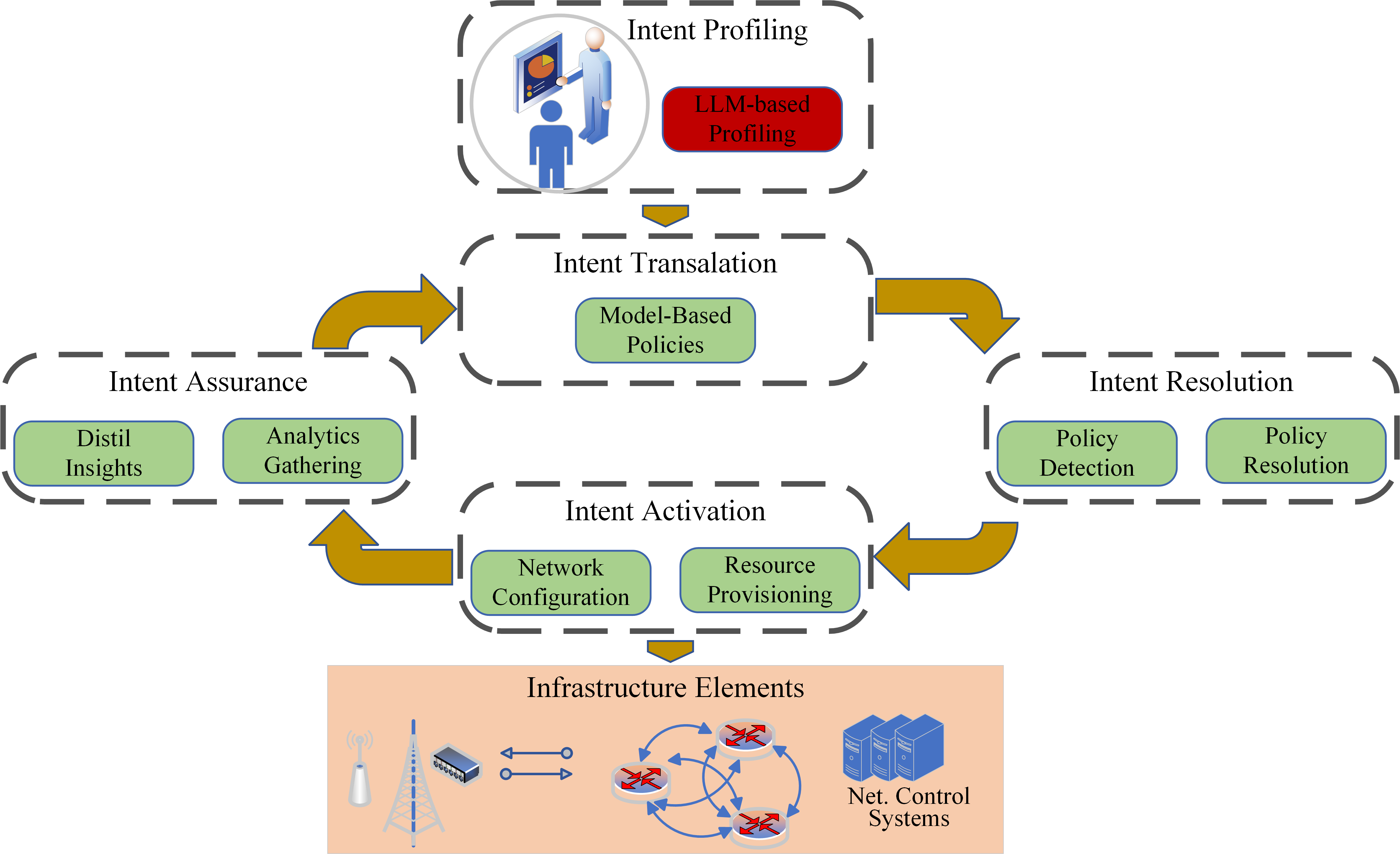

- Security in intent-based networking: Challenges and solutions. Authorea Preprints, 2023.

- Security for 5g and beyond. IEEE Communications Surveys & Tutorials, 21(4):3682–3722, 2019.

- Huntgpt: Integrating machine learning-based anomaly detection and explainable ai with large language models (llms). arXiv preprint arXiv:2309.16021, 2023.

- Leveraging bert’s power to classify ttp from unstructured text. In 2022 Workshop on Communication Networks and Power Systems (WCNPS), pages 1–7. IEEE, 2022.

- Tstem: A cognitive platform for collecting cyber threat intelligence in the wild. arXiv preprint arXiv:2402.09973, 2024.

- Transformer-based llms in cybersecurity: An in-depth study on log anomaly detection and conversational defense mechanisms. In 2023 IEEE International Conference on Big Data (BigData), pages 3590–3599. IEEE, 2023.

- Lamp: Extracting text from gradients with language model priors. Advances in Neural Information Processing Systems, 35:7641–7654, 2022.

- Longformer: The long-document transformer, 2020.

- Telecom ai native systems in the age of generative ai – an engineering perspective, 2023.

- Membership inference attacks from first principles. In 2022 IEEE Symposium on Security and Privacy (SP), pages 1897–1914. IEEE, 2022.

- Extracting training data from large language models. In 30th USENIX Security Symposium (USENIX Security 21), pages 2633–2650, 2021.

- Label-only membership inference attacks. In International conference on machine learning, pages 1964–1974. PMLR, 2021.

- Intent-based networking-concepts and definitions. IRTF draft work-in-progress, 2020.

- Zero touch management: A survey of network automation solutions for 5g and 6g networks. IEEE Communications Surveys & Tutorials, 24(4):2535–2578, 2022.

- Survey on 6g frontiers: Trends, applications, requirements, technologies and future research. IEEE Open Journal of the Communications Society, 2:836–886, 2021.

- End-to-end service monitoring for zero-touch networks. Journal of ICT Standardization, pages 91–112, 2021.

- Privacy side channels in machine learning systems. arXiv preprint arXiv:2309.05610, 2023.

- Jailbreaker: Automated jailbreak across multiple large language model chatbots. arXiv preprint arXiv:2307.08715, 2023.

- Pentestgpt: An llm-empowered automatic penetration testing tool. arXiv preprint arXiv:2308.06782, 2023.

- Bert: Pre-training of deep bidirectional transformers for language understanding, 2019.

- Human-in-the-loop ai reviewing: Feasibility, opportunities, and risks. Journal of the Association for Information Systems, 25(1):98–109, 2024.

- Are diffusion models vulnerable to membership inference attacks?, 2023.

- European Telecommunications Standards Institute. 5G; 5G System; Network Data Analytics Services; Stage 3. Technical Specification TS 129 520 V15.3.0, European Telecommunications Standards Institute (ETSI), April 2019. 3GPP TS 29.520 version 15.3.0 Release 15. [Online]. Available: https://www.etsi.org/deliver/etsi_ts/129500_129599/129520/15.03.00_60/ts_129520v150300p.pdf.

- European Telecommunications Standards Institute. 5G; Procedures for the 5G System (5GS). Technical Specification TS 123 502 V16.7.0, European Telecommunications Standards Institute (ETSI), January 2021. 3GPP TS 23.502 version 16.7.0 Release 16. [Online]. Available: https://www.etsi.org/deliver/etsi_ts/123500_123599/123502/16.07.00_60/ts_123502v160700p.pdf.

- Machine learning-based zero-touch network and service management: A survey. Digital Communications and Networks, 8(2):105–123, 2022.

- On the impact of machine learning randomness on group fairness. FAccT ’23, page 1789–1800, New York, NY, USA, 2023. Association for Computing Machinery.

- Gradientcoin: A peer-to-peer decentralized large language models, 2023.

- Network automation and data analytics in 3gpp 5g systems. IEEE Network, 2023.

- Yuanhao Gong. Dynamic large language models on blockchains, 2023.

- Logbert: Log anomaly detection via bert. In 2021 international joint conference on neural networks (IJCNN), pages 1–8. IEEE, 2021.

- From chatgpt to threatgpt: Impact of generative ai in cybersecurity and privacy. IEEE Access, 2023.

- Llm multi-agent systems: Challenges and open problems, 2024.

- A fast, performant, secure distributed training framework for large language model, 2024.

- Sleeper agents: Training deceptive llms that persist through safety training. arXiv preprint arXiv:2401.05566, 2024.

- Achieving model robustness through discrete adversarial training, 2021.

- Prada: protecting against dnn model stealing attacks. In 2019 IEEE European Symposium on Security and Privacy (EuroS&P), pages 512–527. IEEE, 2019.

- Cyber sentinel: Exploring conversational agents in streamlining security tasks with gpt-4. arXiv preprint arXiv:2309.16422, 2023.

- User inference attacks on large language models. arXiv preprint arXiv:2310.09266, 2023.

- Maze: Data-free model stealing attack using zeroth-order gradient estimation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 13814–13823, 2021.

- Pac-gpt: A novel approach to generating synthetic network traffic with gpt-3. IEEE Access, 2023.

- On the reliability of watermarks for large language models, 2023.

- Gender bias and stereotypes in large language models. In Proceedings of The ACM Collective Intelligence Conference, pages 12–24, 2023.

- Federatedscope-llm: A comprehensive package for fine-tuning large language models in federated learning, 2023.

- Albert: A lite bert for self-supervised learning of language representations, 2020.

- Log parsing with prompt-based few-shot learning. arXiv preprint arXiv:2302.07435, 2023.

- A survey on intent-based networking. IEEE Communications Surveys & Tutorials, 25(1):625–655, 2023.

- Chatgpt as an attack tool: Stealthy textual backdoor attack via blackbox generative model trigger. arXiv preprint arXiv:2304.14475, 2023.

- Text adversarial purification as defense against adversarial attacks, 2023.

- Large language models can be strong differentially private learners, 2022.

- Demystifying rce vulnerabilities in llm-integrated apps. arXiv preprint arXiv:2309.02926, 2023.

- Prompt injection attack against llm-integrated applications. arXiv preprint arXiv:2306.05499, 2023.

- Prompt injection attacks and defenses in llm-integrated applications. arXiv preprint arXiv:2310.12815, 2023.

- A survey on zero touch network and service management (zsm) for 5g and beyond networks. Journal of Network and Computer Applications, 203:103362, 2022.

- Bc4llm: Trusted artificial intelligence when blockchain meets large language models, 2023.

- Autonomous federated learning for distributed intrusion detection systems in public networks. IEEE Access, 11:121325–121339, 2023.

- How hardened is your hardware? guiding chatgpt to generate secure hardware resistant to cwes. In International Symposium on Cyber Security, Cryptology, and Machine Learning, pages 320–336. Springer, 2023.

- Automatically detecting expensive prompts and configuring firewall rules to mitigate denial of service attacks on large language models. 2024.

- National Institute of Standards and Technology. Nist cybersecurity framework. https://www.nist.gov/cyberframework, 2024. Accessed: 2024-02-20.

- Probing toxic content in large pre-trained language models. pages 4262–4274, Online, August 2021. Association for Computational Linguistics.

- Training language models to follow instructions with human feedback. In S. Koyejo, S. Mohamed, A. Agarwal, D. Belgrave, K. Cho, and A. Oh, editors, Advances in Neural Information Processing Systems, volume 35, pages 27730–27744. Curran Associates, Inc., 2022.

- Pac-GPT. Network Packet Generator. https://github.com/dark-0ne/NetworkPacketGenerator, 2024.

- PentestGPT. A gpt-empowered penetration testing tool. https://github.com/GreyDGL/PentestGPT, 2023. Accessed: 2024-02-16.

- Llm self defense: By self examination, llms know they are being tricked, 2023.

- Synergistic integration of large language models and cognitive architectures for robust ai: An exploratory analysis. In Proceedings of the AAAI Symposium Series, volume 2, pages 396–405, 2023.

- Distilbert, a distilled version of bert: smaller, faster, cheaper and lighter, 2020.

- You autocomplete me: Poisoning vulnerabilities in neural code completion. In 30th USENIX Security Symposium (USENIX Security 21), pages 1559–1575, 2021.

- Gpt-2c: A parser for honeypot logs using large pre-trained language models. In Proceedings of the 2021 IEEE/ACM International Conference on Advances in Social Networks Analysis and Mining, pages 649–653, 2021.

- Prompt-specific poisoning attacks on text-to-image generative models. arXiv preprint arXiv:2310.13828, 2023.

- A novel anomaly-based intrusion detection model using psogwo-optimized bp neural network and ga-based feature selection. Sensors, 22(23):9318, 2022.

- Cyber threat hunting using unsupervised federated learning and adversary emulation. In 2023 IEEE International Conference on Cyber Security and Resilience (CSR), pages 315–320. IEEE, 2023.

- Ddos attack detection using unsupervised federated learning for 5g networks and beyond. In 2023 Joint European Conference on Networks and Communications & 6G Summit (EuCNC/6G Summit), pages 442–447. IEEE, 2023.

- Membership inference attacks against machine learning models. In 2017 IEEE symposium on security and privacy (SP), pages 3–18. IEEE, 2017.

- Information leakage in embedding models. In Proceedings of the 2020 ACM SIGSAC conference on computer and communications security, pages 377–390, 2020.

- Beyond memorization: Violating privacy via inference with large language models. arXiv preprint arXiv:2310.07298, 2023.

- Fake news detectors are biased against texts generated by large language models. arXiv preprint arXiv:2309.08674, 2023.

- Towards evaluation and understanding of large language models for cyber operation automation. In 2023 IEEE Conference on Communications and Network Security (CNS), pages 1–6. IEEE, 2023.

- Defending against backdoor attacks in natural language generation. Proceedings of the AAAI Conference on Artificial Intelligence, 37(4):5257–5265, Jun. 2023.

- You reap what you sow: On the challenges of bias evaluation under multilingual settings. In Proceedings of BigScience Episode# 5–Workshop on Challenges & Perspectives in Creating Large Language Models, pages 26–41, 2022.

- 6g system architecture: A service of services vision. ITU journal on future and evolving technologies, 3(3):710–743, 2022.

- Fusionai: Decentralized training and deploying llms with massive consumer-level gpus, 2023.

- Ai-native interconnect framework for integration of large language model technologies in 6g systems. arXiv preprint arXiv:2311.05842, 2023.

- Breaking bad: Unraveling influences and risks of user inputs to chatgpt for game story generation. In International Conference on Interactive Digital Storytelling, pages 285–296. Springer, 2023.

- Data-free model extraction. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 4771–4780, June 2021.

- How prevalent is gender bias in chatgpt?–exploring german and english chatgpt responses. arXiv preprint arXiv:2310.03031, 2023.

- The silence of the llms: Cross-lingual analysis of political bias and false information prevalence in chatgpt, google bard, and bing chat. 2023.

- You see what i want you to see: poisoning vulnerabilities in neural code search. In Proceedings of the 30th ACM Joint European Software Engineering Conference and Symposium on the Foundations of Software Engineering, pages 1233–1245, 2022.

- Jailbreak and guard aligned language models with only few in-context demonstrations, 2023.

- Defending pre-trained language models as few-shot learners against backdoor attacks. Advances in Neural Information Processing Systems, 36, 2024.

- Can llms express their uncertainty? an empirical evaluation of confidence elicitation in llms, 2023.

- Backdooring instruction-tuned large language models with virtual prompt injection, 2023.

- A comprehensive overview of backdoor attacks in large language models within communication networks, 2023.

- Poisonprompt: Backdoor attack on prompt-based large language models. arXiv preprint arXiv:2310.12439, 2023.

- A survey on large language model (llm) security and privacy: The good, the bad, and the ugly, 2023.

- Towards improving adversarial training of nlp models, 2021.

- Rrhf: Rank responses to align language models with human feedback without tears, 2023.

- Building trust in conversational ai: A comprehensive review and solution architecture for explainable, privacy-aware systems using llms and knowledge graph, 2023.

- Towards building the federated gpt: Federated instruction tuning, 2024.

- Prompts should not be seen as secrets: Systematically measuring prompt extraction attack success, 2023.

- Promptbench: Towards evaluating the robustness of large language models on adversarial prompts, 2023.
