Vision-Based Human Pose Estimation via Deep Learning: A Survey

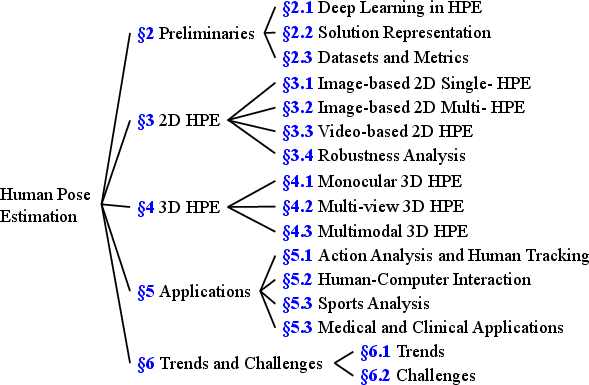

Abstract: Human pose estimation (HPE) has attracted a significant amount of attention from the computer vision community in the past decades. Moreover, HPE has been applied to various domains, such as human-computer interaction, sports analysis, and human tracking via images and videos. Recently, deep learning-based approaches have shown state-of-the-art performance in HPE-based applications. Although deep learning-based approaches have achieved remarkable performance in HPE, a comprehensive review of deep learning-based HPE methods remains lacking in the literature. In this article, we provide an up-to-date and in-depth overview of the deep learning approaches in vision-based HPE. We summarize these methods of 2-D and 3-D HPE, and their applications, discuss the challenges and the research trends through bibliometrics, and provide insightful recommendations for future research. This article provides a meaningful overview as introductory material for beginners to deep learning-based HPE, as well as supplementary material for advanced researchers.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Explain it Like I'm 14

A simple explanation of “Vision‑based Human Pose Estimation via Deep Learning: A Survey”

What this paper is about

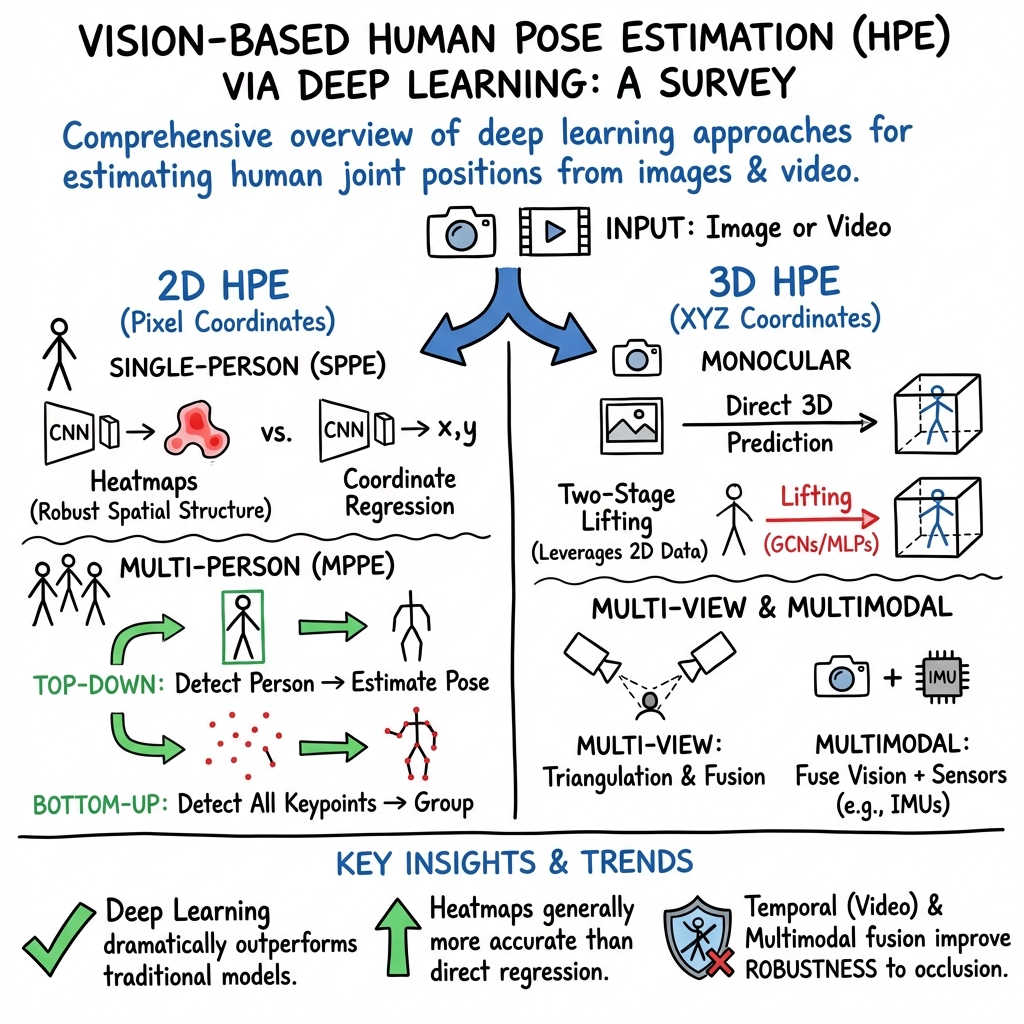

This paper is a big “overview tour” of how computers learn to find the positions of people’s body joints (like shoulders, elbows, knees, and ankles) in photos and videos. That task is called human pose estimation. The authors focus on methods that use deep learning and cover both 2D poses (on a flat image) and 3D poses (in real space). They also talk about where these methods are used, which datasets and scores are used to test them, what’s hard about the problem, and where the field is headed.

What questions the paper tries to answer

In friendly terms, the paper asks:

- How do modern deep learning methods figure out where body joints are in images and videos?

- What are the main types of approaches for 2D and 3D pose estimation, and how do they differ?

- Which datasets and “scoreboards” are commonly used to compare methods?

- What are the biggest challenges (like crowded scenes or motion blur), and what trends are researchers exploring next?

- Based on many published papers (bibliometrics), what directions seem most promising for the future?

How the researchers approached it

This is a survey paper, so instead of running a single new experiment, the authors:

- Collected and organized many recent studies on pose estimation.

- Explained the main ideas behind the models in simple building blocks.

- Compared approaches using common datasets and evaluation measures.

- Used bibliometrics (basically counting and analyzing publications) to spot research trends over time.

To make the technical parts easier to picture:

- Convolutional Neural Networks (CNNs): Think of these as “smart filters” that scan an image and learn to spot patterns like edges, textures, or body parts.

- Recurrent Neural Networks (RNNs): These are good at sequences. For videos, they use information from previous frames to help understand the current frame—like remembering where a moving elbow was a moment ago.

- Graph Convolutional Networks (GCNs): A human skeleton looks like a network of connected points (joints). GCNs work directly on that “joint graph” to learn how joints relate to each other, which helps clean up or “lift” poses from 2D to 3D.

- Heatmaps: Instead of guessing a joint’s exact x–y position directly, many methods create a “heatmap,” like a glowing weather map that shows where a joint is most likely to be. The brightest spot is the predicted location.

- Top‑down vs. bottom‑up (for many people in one image):

- Top‑down: First find each person with a person detector (draw a box around each person), then find their joints inside that box.

- Bottom‑up: First find all joints in the whole image, then group those joints into individuals.

- 2D vs. 3D pose:

- 2D is locating joints on the picture plane.

- 3D adds depth, estimating where joints are in real space. Sometimes this uses multiple cameras or combines video with motion sensors (IMUs).

They also explain common test data and scores:

- Datasets: COCO and MPII for 2D, Human3.6M and CMU Panoptic for 3D, among others.

- Scores:

- PCK: “Percentage of Correct Keypoints” (how many joints you got right).

- mAP/OKS (from COCO): A similarity score that rewards accuracy and visibility, averaged in a standard way.

- MPJPE for 3D: “Mean Per Joint Position Error”—the average distance (like centimeters) between predicted and true joint locations.

What the main takeaways are and why they matter

Here are the key ideas the survey highlights and why they’re important:

- Deep learning dominates pose estimation:

- CNN‑based methods, often with special backbones like Hourglass, CPN, and HRNet, greatly improved accuracy compared to older, handcrafted methods.

- Heatmaps usually beat direct coordinate prediction for finding precise joint locations.

- Two main strategies for multi‑person 2D pose:

- Top‑down is often more accurate for each person but slows down when many people are in the image (because it processes one person at a time).

- Bottom‑up is faster and more stable in crowded scenes, because it detects all joints at once and then groups them.

- Videos can help:

- Using time information (RNNs or similar) makes pose tracking more stable under motion blur, defocus, or brief occlusions.

- 3D pose estimation is harder but progressing:

- Monocular 3D (from a single camera) is tough due to depth ambiguity. A common and strong recipe is “two‑stage”: first do 2D pose, then “lift” to 3D using a specialized network (often with GCNs).

- Multi‑view (several cameras) or combining cameras with body sensors (IMUs) can improve 3D accuracy a lot.

- Many high‑quality 3D datasets are recorded indoors; getting outdoor, real‑world 3D data is still challenging.

- Robustness and reliability:

- Methods that use heatmaps tend to be more robust to tiny pixel changes than direct regression methods.

- Some joints (like head/neck) are easier to detect reliably than others (like hips/ankles).

- Trends spotted through bibliometrics and recent work:

- Transformers are becoming popular backbones for pose estimation, capturing long‑range relationships in images.

- GCNs are widely used for modeling the skeleton’s structure and for 2D‑to‑3D lifting.

- Semi‑supervised and self‑supervised learning are promising, especially for videos where labeling every frame is expensive.

- Efficient, real‑time models for phones and edge devices are gaining attention.

These insights matter because they guide developers and researchers toward methods that are accurate, fast, and practical for the real world.

What this could lead to in the real world

Better pose estimation can improve:

- Sports and fitness coaching apps that give instant feedback on form.

- Video games and virtual reality that track your movements without special suits.

- Human‑computer interaction, like gesture controls.

- Healthcare and rehabilitation, by monitoring posture and movement safely.

- Animation and movie effects, making motion capture cheaper and more portable.

The paper suggests that future work should focus on:

- Handling crowded scenes and occlusions even better.

- Making models faster and lighter for phones and low‑power devices.

- Improving robustness to real‑world changes (lighting, motion blur) and adversarial tricks.

- Getting more and better 3D data in natural, outdoor settings.

- Smarter ways to learn from unlabeled or sparsely labeled videos.

In short, this survey maps the field, shows what works best today, and points to where pose estimation is heading next—toward more accurate, robust, and accessible systems that work in everyday life.

Knowledge Gaps

Below is a single, concise list of concrete knowledge gaps, limitations, and open questions identified from the paper; each item highlights what remains missing or underexplored and can guide actionable future research.

- Transformer-based HPE: lack of a systematic, controlled comparison of CNN vs. ViT/transformer models across SPPE/MPPE, 2D/3D, including data scaling laws, training recipes, robustness, and compute–accuracy trade-offs.

- Heatmap vs. regression representations: missing quantification of heatmap discretization/quantization error (e.g., effect of output stride), calibration of predicted confidences, and unified continuous/distributional representations that scale cleanly to 3D.

- Multi-person heatmap encoding: max-aggregation for multi-person heatmaps discards multiplicity under overlap; alternative encodings that preserve per-instance modes without brittle post-hoc grouping remain underexplored.

- End-to-end grouping in bottom-up MPPE: absence of differentiable, robust, and efficient joint-to-instance association pipelines that remain stable under heavy crowding, severe occlusions, and small person scales.

- Monocular multi-person 3D HPE: limited coverage of bottom-up, real-time 3D joint detection and instance grouping from a single camera under crowding, with explicit occlusion/depth-order reasoning and identity persistence.

- Temporal evaluation: no standardized temporal metrics (e.g., jitter, temporal smoothness, ID switches for pose tracking) and benchmarks for video-based HPE beyond per-frame accuracy.

- Semi-/self-supervised video learning: insufficient exploration of large-scale unlabeled video pretraining (e.g., motion/geometry constraints, cycle consistency) to reduce annotation needs for 2D and 3D HPE.

- Cross-dataset generalization: missing systematic studies on train–test transfer (e.g., COCO→CrowdPose, Human3.6M→3DPW), robust domain adaptation strategies, and clear protocols for reporting generalization gaps.

- Outdoor and in-the-wild 3D datasets: scarcity of large-scale, diverse (multi-person, occlusions, activities, demographics) outdoor 3D ground truth; need for scalable capture methods and benchmarks with consistent joint definitions.

- 3D robustness: lack of benchmarks and analyses for 3D HPE under common corruptions (blur, noise), occlusions, viewpoint shifts, and adversarial perturbations; certified robustness and principled defenses remain open.

- Metrics and protocols: reliance on PCK/OKS and MPJPE without occlusion-aware scoring, bone-length/angle errors, kinematic-plausibility constraints, or clear alignment protocols (e.g., PA-MPJPE vs. root-relative) across studies.

- Uncertainty quantification: limited treatment of calibrated per-joint uncertainty (aleatoric/epistemic) and how to propagate it to downstream decisions, tracking, or sensor fusion.

- Efficiency and deployment: insufficient benchmarking under realistic latency/throughput/energy constraints on edge and mobile devices, and missing standardized reporting of FLOPs, memory, and speed across methods.

- Multi-modal fusion beyond IMUs: opportunities with depth, event cameras, radar/RF/WiFi remain underexplored; open issues include synchronization, self-calibration, privacy, and learning under partial sensor failure.

- Camera calibration assumptions: many multi-view methods presume known intrinsics/extrinsics; self-calibrating HPE under unknown/Changing camera parameters and minimal calibration is largely open.

- Parametric body models: integration of keypoint-based HPE with mesh/SMPL estimation for consistent 2D–3D reasoning, and unified evaluation of keypoint vs. mesh quality in the wild is not addressed.

- Pose tracking and identity: limited discussion of joint estimation–tracking integration for consistent per-person trajectories over long videos with re-identification after occlusions.

- Crowd and occlusion reasoning: need for explicit occluder modeling, depth ordering, and synthetic occlusion augmentation strategies that demonstrably improve performance in dense scenes.

- Graph design for GCNs: absence of adaptive/dynamic skeleton graphs that reflect pose-dependent connectivity, enforce kinematic constraints, or embed physics priors in a learnable, data-efficient way.

- Annotation noise and label quality: impact of noisy/inconsistent labels across datasets and robust training or relabeling strategies (e.g., consensus labeling, teacher–student refinement) are not analyzed.

- Fairness and bias: no assessment of performance disparities across demographics, body shapes, clothing, age groups, or mobility aids, nor guidance on bias-aware metrics and dataset curation.

- Reproducibility and reporting: tables inconsistently report parameters/FLOPs and omit standardized runtime metrics; a community reporting standard for HPE is needed.

- Absolute scale in monocular 3D: strategies for recovering metric 3D scale without known camera or scene scale (e.g., ground contact, biomechanical priors) are not reviewed.

- Downstream impact: how pose errors propagate to action recognition, biomechanics, human–robot interaction, and safety-critical applications; task-aware training and evaluation remain underexplored.

- Adversarial defenses: while vulnerabilities are noted for 2D HPE, systematic evaluations of defense mechanisms (training schemes, augmentations, certified bounds) and their trade-offs are missing.

- Real-world imaging conditions: limited guidance on handling fisheye/360° lenses, rolling shutter, motion blur, low light, strong backlight, and egocentric views; domain adaptation strategies are needed.

- Long-tailed and rare poses: data and methods for extreme or rare poses (e.g., acrobatics, assistive devices) and curriculum or compositional augmentation to improve coverage are not discussed.

- Continual/online learning: absence of methods for on-device adaptation to new scenes/cameras without catastrophic forgetting and with limited labels or compute.

Practical Applications

Immediate Applications

The following applications can be deployed now by leveraging current 2D/3D vision-based human pose estimation (HPE) capabilities, datasets, and open-source/tooling ecosystems summarized in the paper.

- Smart fitness and posture coaching on mobile

- Sectors: Consumer health, wellness, sports tech

- Enabled by: Real-time 2D SPPE (e.g., BlazePose via MediaPipe), robust heatmap-based backbones (HRNet, SimpleBaseline), video-based temporal smoothing

- Tools/workflows: On-device pose inference; rep counting, range-of-motion and posture scoring workflows; feedback overlays

- Assumptions/dependencies: Good lighting and camera placement; mobile GPU/NPUs for real-time; non-crowded scenes; accuracy tolerance suitable for consumer feedback

- Broadcast and club-level sports analytics

- Sectors: Sports, media, coaching

- Enabled by: Top-down multi-person 2D HPE (e.g., HRNet/CPN + Faster R-CNN), PoseTrack-style tracking, 2D→3D lifting for constrained motions

- Tools/workflows: Highlight detection, tactic boards, biomechanical proxies (joint angles, cadence), event tagging

- Assumptions/dependencies: Fixed cameras with known viewpoints; moderate occlusion; latency budgets that accommodate top-down scaling w/ player count

- Retail queuing and staff allocation analytics without facial ID

- Sectors: Retail, operations

- Enabled by: Bottom-up 2D MPPE (OpenPose-style, HigherHRNet) robust in crowded scenes; OKS/mAP-based QA

- Tools/workflows: Queue length and wait-time estimation using skeleton counts and trajectories; heatmap zones-of-interest

- Assumptions/dependencies: Privacy policies (no identity); edge inference preferred; camera placement minimizing extreme occlusions

- Workplace ergonomics and musculoskeletal risk screening

- Sectors: Manufacturing, logistics, construction, occupational health

- Enabled by: 2D MPPE with action analysis; video-based temporal consistency; GCN-based pose refinement for joint associations

- Tools/workflows: RULA/REBA-like scoring from extracted joint angles; dashboards for safety teams; alerting for unsafe postures

- Assumptions/dependencies: Calibrated camera coverage of work areas; union/privacy agreements; acceptance of non-medical accuracy

- Gesture-based human-computer interaction (HCI)

- Sectors: Software, gaming, XR, smart home

- Enabled by: 2D SPPE + hand/upper-body keypoints; transformer-enhanced backbones (TransPose, ViTPose) for precision gestures

- Tools/workflows: Gesture recognizer pipelines on top of keypoints; latency-optimized runtimes (MediaPipe graph)

- Assumptions/dependencies: Controlled backgrounds; consistent framing; user onboarding for gesture sets

- Video indexing and content moderation

- Sectors: Media platforms, security ops, edtech

- Enabled by: Pose-driven action recognition on top of 2D HPE; video-based temporal models (LSTM, PoseWarper)

- Tools/workflows: Action tags (e.g., fighting, falling, running); moderation queues; educational content chaptering

- Assumptions/dependencies: Acceptable false-positive/negative rates; domain-specific training; policy thresholds

- Real-time crowd analytics for events

- Sectors: Venue management, public safety

- Enabled by: Bottom-up 2D MPPE (HigherHRNet, associative embedding) more robust in crowds; COCO/CrowdPose-tuned models

- Tools/workflows: Crowd flow maps from skeleton trajectories; pinch-point detection; occupancy estimation

- Assumptions/dependencies: Edge or on-prem compute; policy constraints for public surveillance; signage/camera placement

- Animation and virtual production previsualization

- Sectors: Entertainment, game dev, VFX

- Enabled by: 2D→3D lifting (GCN-based) from single-view videos; monocular 3D heatmap methods for coarse 3D; retargeting pipelines

- Tools/workflows: Live previz of actors; quick blocking without mocap suits; integration with Unreal/Unity

- Assumptions/dependencies: Not clinical-grade; limited occlusions; style-specific calibration for retargeting

- Driver and operator monitoring

- Sectors: Automotive, heavy machinery, transportation

- Enabled by: Upper-body 2D HPE; temporal models for drowsiness/inattention via head/shoulder/arm pose

- Tools/workflows: In-cabin cameras; on-edge inference; alerting systems

- Assumptions/dependencies: IR/night-vision support; strict privacy-by-design; embedded compute constraints

- Tele-rehabilitation readiness checks (non-diagnostic)

- Sectors: Telehealth, physical therapy (screening/engagement)

- Enabled by: 2D HPE with temporal smoothing; interpretable joint-angle features and exercise form analysis

- Tools/workflows: Clinician portals; patient guidance overlays; progress tracking

- Assumptions/dependencies: Not a medical device unless validated; clinically acceptable error margins; home environment variability

- Academic benchmarking and dataset curation workflows

- Sectors: Academia, AI R&D

- Enabled by: Survey’s taxonomy (SPPE/MPPE, 2D/3D, monocular/multiview), metrics (PCK, OKS/mAP, MPJPE), bibliometrics

- Tools/workflows: Semi-supervised video labeling (PoseWarper), robustness test suites (COCO-C/MPII-C/OCHuman-C), reproducible pipelines with open-source (OpenPose, AlphaPose, BlazePose)

- Assumptions/dependencies: Licensing for datasets; standardized evaluation and data splits; compute for large-scale training

- Policy pilots for privacy-preserving analytics

- Sectors: Public policy, compliance, legal

- Enabled by: Skeleton-only processing (no faces/IDs), on-device/edge inference, standard metrics to set performance thresholds

- Tools/workflows: DPIA templates; procurement specs requiring edge processing, minimum OKS/mAP; data retention and anonymization rules

- Assumptions/dependencies: Legal alignment with local privacy laws; stakeholder engagement; third-party audits

Long-Term Applications

The following applications require further research, robust generalization, scaling, or infrastructure investment (e.g., outdoor 3D accuracy, occlusion robustness, certification).

- Clinical-grade 3D biomechanics and gait analysis at scale

- Sectors: Healthcare, medical devices, eldercare

- Enabled by: Multiview 3D HPE (learnable triangulation), vision+IMU fusion (Video Inertial Poser, DeepFuse), low-MPJPE monocular methods for outdoors

- Tools/workflows: Clinic-in-a-box systems; standardized 3D joint kinematics; EHR integration

- Assumptions/dependencies: Regulatory validation; bias/robustness across demographics; calibrated setups or reliable sensor fusion

- Full-body XR avatars in-the-wild without external trackers

- Sectors: XR/Metaverse, gaming, social

- Enabled by: Monocular 3D HPE with temporal/GCN lifting; transformer backbones; vision+IMU fusion for feet/hip stability

- Tools/workflows: Low-latency streaming to game engines; personalization and retargeting; occlusion-aware body completion

- Assumptions/dependencies: Sub-30 ms end-to-end latency; occlusion handling; battery-efficient wearables

- Human–robot collaboration with dynamic safety envelopes

- Sectors: Robotics, industrial automation

- Enabled by: Bottom-up MPPE for crowded floors; predictive pose forecasting; robustness analysis against adversarial and sensor noise

- Tools/workflows: Real-time perception on edge GPUs; dynamic geofencing around human skeletons; risk scoring

- Assumptions/dependencies: Deterministic latency; safety certification; domain-adapted robustness benchmarks

- City-scale crowd behavior and evacuation modeling

- Sectors: Smart cities, emergency response, transportation

- Enabled by: Distributed bottom-up HPE at camera nodes; multi-camera association; privacy-preserving skeleton analytics

- Tools/workflows: Federated analytics; zoning heatmaps; simulation calibration from real pose dynamics

- Assumptions/dependencies: Public acceptance and legal frameworks; resilient edge/cloud orchestration; camera network calibration

- Automated safety compliance monitoring (PPE, posture, proximity)

- Sectors: Construction, energy, manufacturing

- Enabled by: MPPE + action recognition; 3D scene understanding for height/proximity; robustness under occlusions and weather

- Tools/workflows: Compliance dashboards; incident replay; integration with site management tools

- Assumptions/dependencies: Harsh environment robustness; union agreements; low false-alarm rates

- Personalized rehab/therapy with digital twins

- Sectors: Healthcare, wellness

- Enabled by: Accurate 3D HPE with individualized models; temporal error correction and biomechanical constraints

- Tools/workflows: Patient-specific digital twins; adaptive exercise prescriptions; clinician-in-the-loop

- Assumptions/dependencies: Longitudinal calibration; validated clinical outcomes; privacy-preserving storage

- In-the-wild multi-person 3D HPE for sports and events

- Sectors: Sports, media, analytics

- Enabled by: Multiview rigs + learnable triangulation; monocular 3D with scene priors; cross-view fusion networks

- Tools/workflows: Real-time 3D tracking of teams; tactical 3D reconstructions; rule-based event detection

- Assumptions/dependencies: Multi-camera synchronization; field-wide coverage; compute at venue

- Standardized robustness, fairness, and safety certification for HPE

- Sectors: Policy, standards bodies, enterprise procurement

- Enabled by: Robustness datasets (COCO-C, MPII-C, OCHuman-C), adversarial testing for pose, cross-demographic performance reporting

- Tools/workflows: Certification suites, model cards with PCK/OKS/MPJPE across subgroups and conditions

- Assumptions/dependencies: Community consensus on protocols; third-party labs; access to diverse data

- Synthetic-to-real domain adaptation pipelines

- Sectors: Software tools, robotics, AV, sports

- Enabled by: Large-scale simulated datasets (e.g., JTA) and self/semi-supervised video learning across domains

- Tools/workflows: Curriculum learning from sim to real; photorealistic augmentation; pose-consistency constraints

- Assumptions/dependencies: Bridging appearance and motion gaps; licensing for synthetic assets; compute for large pretraining

- On-device federated pose learning with privacy guarantees

- Sectors: Consumer devices, smart home, mobile

- Enabled by: Lightweight transformer/CNN backbones; federated learning and differential privacy; on-device quantization/pruning

- Tools/workflows: Periodic federated updates; edge model monitors; per-device personalization

- Assumptions/dependencies: Stable connectivity; privacy compliance; model drift management

- Insurance and risk scoring from occupational movement patterns

- Sectors: Insurance, enterprise risk management

- Enabled by: Long-horizon pose time-series, action recognition over HPE; aggregated, anonymized analytics

- Tools/workflows: Premium adjustments based on ergonomic risk; intervention recommendations

- Assumptions/dependencies: Ethical frameworks to prevent discrimination; transparent opt-in; validated correlation with claims

- Education and skill training with pose-guided feedback

- Sectors: Edtech, vocational training

- Enabled by: 2D/3D HPE plus action feedback loops; video-based semi-supervised labeling for content creation

- Tools/workflows: Form-checking for dance, lab safety, trades; automatic grading rubrics from pose metrics

- Assumptions/dependencies: Diversity of body types/abilities; domain rubrics; instructor acceptance

Notes on cross-cutting dependencies

- Accuracy vs. use case: Consumer coaching tolerates higher error than clinical or safety-critical applications; choose appropriate metrics (PCK/OKS/mAP for 2D, MPJPE for 3D).

- Scene complexity: Top-down scales poorly with person count; bottom-up is preferred for crowds but may have lower peak accuracy.

- Occlusions and motion blur: Video-based temporal models and multiview/IMU fusion mitigate but require extra sensors/calibration.

- Compute and latency: Real-time demands edge accelerators; mobile scenarios benefit from MediaPipe-style graphs and efficient backbones.

- Privacy and ethics: Favor skeleton-only processing, edge inference, and strong governance; avoid identity linkage without consent.

- Robustness and generalization: Use robustness suites (COCO-C/MPII-C/OCHuman-C), adversarial testing, and demographic audits before deployment.

Glossary

- 2D-3D lifting: A process that maps 2D keypoint estimates into 3D joint coordinates using learned models. "An novel SemGCN for 2D-3D lifting."

- Associative embedding: A technique that learns per-pixel embeddings so detected keypoints can be grouped into individual persons. "introduced associative embedding to train neural networks for assigning keypoints to each person."

- Backbone network: The feature-extraction part of a deep model that supplies representations to task-specific heads. "the so-called backbone network."

- Bibliometrics: Quantitative analysis of scientific publications used to study research activity and trends. "we apply bibliometrics to retrieve scientific publications for analyzing the research trends in HPE."

- Bottom-up approaches: Multi-person pose estimation methods that detect all keypoints first and then group them into individuals. "either top-down approaches or bottom-up approaches:"

- Cascaded Pyramid Network (CPN): A pose-estimation architecture that aggregates multi-scale features in a cascaded fashion. "Cascaded Pyramid Network (CPN)"

- Convolutional Neural Networks (CNNs): Deep networks using convolutional layers, widely employed for visual feature extraction in HPE. "Convolutional Neural Networks (CNNs)"

- Distribution-aware coordinate representation of keypoints (DARK): A refinement method that improves coordinate accuracy by modeling the distribution within heatmaps. "the distribution-aware coordinate representation of keypoints (DARK)"

- Epipolar transformer: A module that leverages epipolar geometry to fuse multi-view information for pose estimation. "An epipolar transformer"

- Feature Pyramid Network (FPN): A multi-scale feature architecture for detection tasks often used to propose person boxes for top-down HPE. "FPN"

- Graph Clustering: A grouping strategy that clusters detected joints via graph methods to form person instances. "Graph Clustering for grouping"

- Graph Convolutional Networks (GCNs): Neural networks that operate on graph-structured data, often modeling skeletal joints and their relations. "Graph Convolutional Networks (GCNs)"

- Heatmap: A per-joint spatial probability map indicating the confidence of a keypoint at each pixel location. "the heatmap representation has become a prevalent solution representation in HPE."

- Hourglass network: An encoder–decoder architecture with symmetric downsampling and upsampling paths used to capture multi-scale features. "Hourglass Networks are used in many HPE studies as backbone networks."

- HRNet: A high-resolution network that maintains multi-scale representations in parallel to improve localization accuracy. "HRNet"

- Hungarian algo.: An assignment algorithm used to associate detected parts or keypoints with person instances. "Hungarian algo."

- Long short-term memory (LSTM): A recurrent neural network module that models temporal dependencies in pose sequences. "long short-term memory (LSTM)"

- Mean Average Precision (mAP): The mean of average precision scores computed across multiple OKS thresholds for multi-person pose evaluation. "The mean AP (mAP) is the mean of AP over ten OKS thresholds"

- Mean Per Joint Position Error (MPJPE): The average Euclidean distance between predicted and ground-truth 3D joint positions. "Mean Per Joint Position Error (MPJPE) is currently a popular metric in 3D HPE"

- Object Keypoint Similarity (OKS): A similarity measure between predicted and ground-truth keypoints that accounts for object scale and keypoint uncertainty. "Object Keypoint Similarity (OKS)"

- Part Affinity Fields: Vector fields that encode limb associations between detected keypoints to enable grouping into people. "Part Affinity \ Fields"

- Percentage of Correct Keypoints (PCK): The fraction of predicted keypoints that fall within a specified normalized distance of ground-truth locations. "Percentage of Correct Keypoints (PCK)"

- Prediction head: The task-specific part of a network that outputs pose predictions from backbone features. "the prediction head"

- Recurrent Neural Networks (RNNs): Neural networks designed for sequential data that incorporate temporal context, used in video-based HPE. "Recurrent Neural Networks (RNNs)"

- Top-down approaches: Multi-person pose methods that first detect person bounding boxes, then run a single-person pose estimator on each. "Top-down approaches apply a person detector"

- Transformer: An attention-based model used to capture long-range dependencies and enhance pose regression or decoding. "the transformer that adopts the attention mechanism"

- Triangulation: A multi-view 3D reconstruction technique that infers 3D joint positions from multiple 2D observations. "An end-to-end DNN triangulation method"

- Two-stage methods: Monocular 3D HPE pipelines that first estimate 2D poses and then lift them to 3D. "two-stage methods entail using off-the-shelf 2D predictors"

- Upsampling: A feature map enlargement operation used to produce high-resolution heatmaps for precise localization. "the operation of upsampling is generally used"

- Vision transformers: Transformer architectures adapted for images, used as backbones or decoders in pose estimation. "utilized vision transformers to implement regression-based HPE"

- Voxel space: A discretized 3D grid representation used for volumetric heatmaps of joint locations. "predicted 3D heatmaps in voxel space"

Collections

Sign up for free to add this paper to one or more collections.