- The paper introduces TsT-GAN, a framework that uses the Transformer architecture to synthesize realistic time-series data.

- The method combines a bidirectional generator with a Transformer-encoder discriminator to avoid the error accumulation seen in autoregressive models.

- Experimental results demonstrate that TsT-GAN outperforms five state-of-the-art models in predictive accuracy across diverse datasets such as Stocks and Energy.

Abstract

The paper "Time-series Transformer Generative Adversarial Networks" (2205.11164) addresses the challenge of generating synthetic time-series data when real-world datasets are scarce or restricted by privacy regulations. The authors introduce TsT-GAN, which uses Transformers to capture the data distributions needed for time-series synthesis. In experiments against existing methods, TsT-GAN outperformed them, most clearly in predictive performance.

Introduction

Time-series data is vital across various fields, yet data constraints pose significant hurdles for analytic models. In domains such as medicine, privacy concerns and restricted access to data necessitate the generation of synthetic datasets. Similarly, international regulations like GDPR have reduced the availability of data related to human-internet interactions. Synthetic data generation serves as a tool to circumvent these limitations, permitting continued model development even when data is scarce.

The proposed framework, TsT-GAN, addresses two objectives for synthetic data generation: accurately capturing the stepwise conditional distributions and capturing the joint distribution of whole sequences. Autoregressive models tend to suffer from error accumulation, because each generated step is conditioned on earlier generated steps and small errors compound along the sequence. TsT-GAN uses the Transformer architecture to mitigate this, and achieves higher predictive performance than five state-of-the-art models across several datasets.

Methodology

The generator aims to approximate the true generating distribution of multivariate time-series data while respecting both a global constraint (the joint distribution of the whole sequence) and a local constraint (the stepwise autoregressive conditional distributions). Both objectives are expressed as distances between real and synthetic distributions, so that the synthetic data remains useful for downstream models. The generator itself is bidirectional, yet it is trained to stay consistent with the autoregressive conditional distributions.
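The two objectives can be written down compactly. The following is a sketch in assumed notation that does not appear in this summary: $p$ is the real distribution over sequences $x_{1:T}$, $\hat{p}$ is the generator's distribution, and $D$ stands for an unspecified distance between distributions.

$$
\text{global:}\quad \min_{\hat{p}} \; D\big(p(x_{1:T}) \,\|\, \hat{p}(x_{1:T})\big)
$$

$$
\text{local:}\quad \min_{\hat{p}} \; D\big(p(x_t \mid x_{1:t-1}) \,\|\, \hat{p}(x_t \mid x_{1:t-1})\big) \quad \text{for each step } t
$$

The global term compares whole sequences, while the local term compares the next-step distribution given the same history.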

Model Architecture

Embedder–Predictor Network:

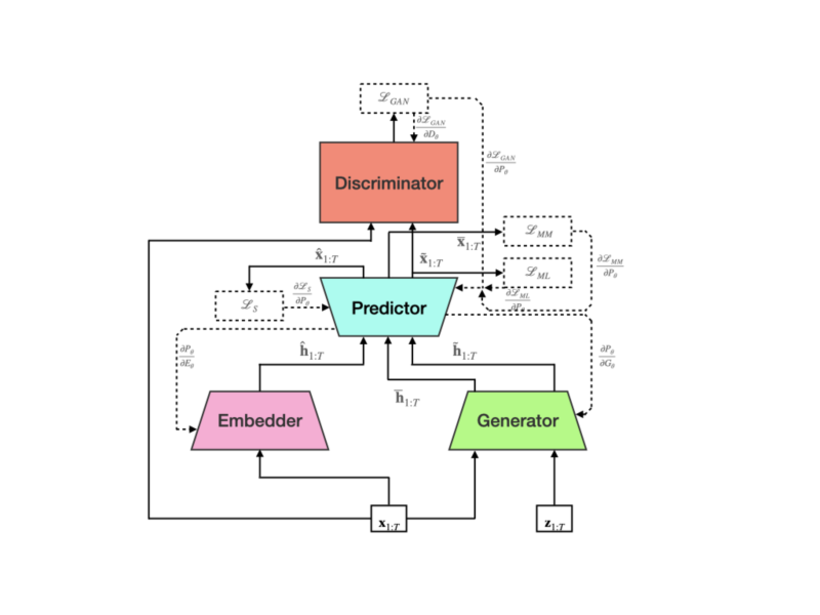

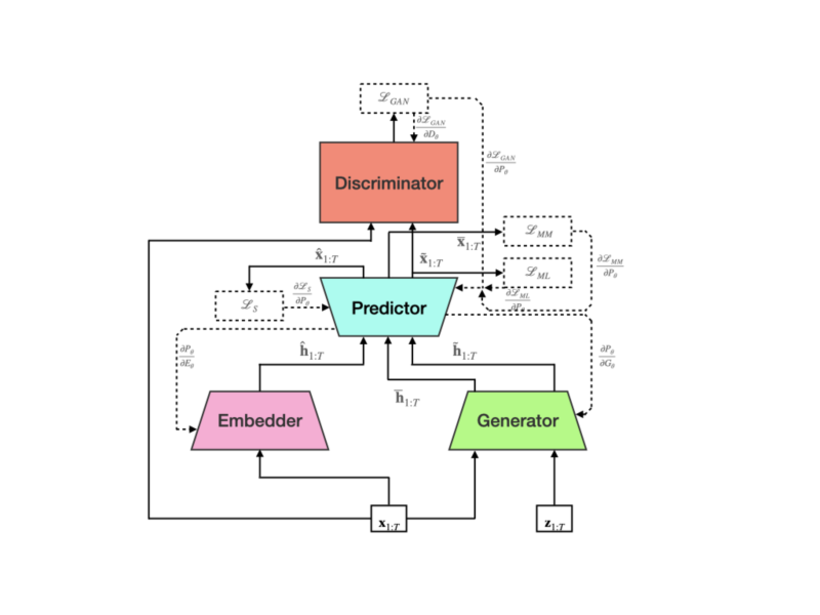

Figure 1: Block diagram of TsT-GAN showing data and gradient flows. Gradients that propagate back to the generator will pass through the predictor network, but will not be allowed to change the parameters of the predictor.

The embedder-predictor network uses a Transformer architecture together with a separate neural network that acts as the predictor, producing next-step predictions for every position of a sequence. Training this network teaches it the stepwise conditional distribution of the sequences, which is essential for accurate time-series synthesis.
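Next-step prediction over an entire sequence can be illustrated with a shifted-target loss. This is a minimal sketch, not the paper's implementation: the mean-squared-error form, the function name `next_step_loss`, and the `persistence_predictor` stand-in for the Transformer are all assumptions made for illustration.

```python
from typing import Callable, List, Sequence

Vector = List[float]


def next_step_loss(
    sequence: Sequence[Vector],
    predictor: Callable[[Sequence[Vector]], List[Vector]],
) -> float:
    """Mean squared error between predicted and true next steps.

    The predictor receives steps 1..T-1 and must return one prediction
    per input step; prediction i is compared with true step i+1.
    """
    predictions = predictor(sequence[:-1])
    targets = sequence[1:]
    squared_errors = [
        (p - t) ** 2
        for pred, target in zip(predictions, targets)
        for p, t in zip(pred, target)
    ]
    return sum(squared_errors) / len(squared_errors)


def persistence_predictor(prefix: Sequence[Vector]) -> List[Vector]:
    # Hypothetical stand-in for the learned network:
    # predicts that the next value equals the current value.
    return [list(step) for step in prefix]


ramp = [[0.0], [1.0], [2.0], [3.0]]
flat = [[5.0], [5.0], [5.0]]
ramp_loss = next_step_loss(ramp, persistence_predictor)
flat_loss = next_step_loss(flat, persistence_predictor)
```

On the ramp the persistence stand-in is off by one at every step, while on the flat sequence it is exact. A learned predictor would be trained to drive this loss down on real data.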

Generator and Discriminator:

The bidirectional generator maps random vectors to latent embeddings and is trained with LS-GAN adversarial losses. Its parameters are updated separately from those of the predictor network: as the Figure 1 caption notes, gradients pass through the predictor on their way back to the generator but do not change the predictor's parameters. The discriminator, a Transformer encoder, classifies whole sequences rather than individual steps, so the adversarial signal reflects the global structure of a sequence instead of relying on error-prone stepwise evaluation.
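The adversarial losses can be sketched as follows, assuming "LS-GAN" refers to the least-squares GAN objective with target 1 for real sequences and 0 for synthetic ones. The function names are hypothetical, and the discriminator is assumed to emit one scalar score per sequence.

```python
from typing import Sequence


def _mean(values: Sequence[float]) -> float:
    return sum(values) / len(values)


def lsgan_discriminator_loss(
    real_scores: Sequence[float], fake_scores: Sequence[float]
) -> float:
    """Least-squares discriminator loss: push real scores to 1, fake scores to 0."""
    real_term = _mean([(s - 1.0) ** 2 for s in real_scores])
    fake_term = _mean([s ** 2 for s in fake_scores])
    return 0.5 * (real_term + fake_term)


def lsgan_generator_loss(fake_scores: Sequence[float]) -> float:
    """Least-squares generator loss: push scores of synthetic sequences to 1."""
    return 0.5 * _mean([(s - 1.0) ** 2 for s in fake_scores])


# One scalar score per whole sequence (assumed discriminator output).
real_scores = [1.0, 1.0]
fake_scores = [0.0, 0.0]
d_loss = lsgan_discriminator_loss(real_scores, fake_scores)
g_loss = lsgan_generator_loss(fake_scores)
```

With a discriminator that separates the two sets perfectly, its own loss is zero and the generator's loss sits at 0.5. The generator lowers its loss only by making the discriminator score synthetic sequences closer to 1.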

Experiments

Evaluation Methodology

The paper evaluates TsT-GAN against baselines such as TimeGAN and C-RNN-GAN, using predictive and discriminative scores together with t-SNE visualization. Together these measures assess how useful the synthetic data is for downstream prediction and how closely it resembles real data.
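The two scores can be illustrated with a small sketch. It assumes TimeGAN-style definitions, which this summary does not spell out: the discriminative score is the distance of a real-versus-synthetic classifier's accuracy from chance, and the predictive score is the mean absolute error on real data of a next-step model trained on synthetic data. The one-coefficient predictor below is a deliberately simple stand-in for the post-hoc model, and all function names are hypothetical.

```python
from typing import List, Sequence


def discriminative_score(labels: Sequence[int], predicted: Sequence[int]) -> float:
    """|accuracy - 0.5|: 0 means real and synthetic are indistinguishable."""
    correct = sum(1 for y, p in zip(labels, predicted) if y == p)
    return abs(correct / len(labels) - 0.5)


def fit_ar1(sequences: Sequence[Sequence[float]]) -> float:
    """Least-squares fit of x[t+1] = a * x[t] (stand-in for a learned predictor)."""
    numerator = sum(s[t] * s[t + 1] for s in sequences for t in range(len(s) - 1))
    denominator = sum(s[t] ** 2 for s in sequences for t in range(len(s) - 1))
    return numerator / denominator


def predictive_score(coefficient: float, real: Sequence[Sequence[float]]) -> float:
    """Mean absolute next-step error on real sequences (lower is better)."""
    errors: List[float] = [
        abs(coefficient * s[t] - s[t + 1]) for s in real for t in range(len(s) - 1)
    ]
    return sum(errors) / len(errors)


# Train on synthetic data, test on real data.
synthetic = [[1.0, 2.0, 4.0, 8.0]]
real = [[3.0, 6.0, 12.0]]
coefficient = fit_ar1(synthetic)
pred_score = predictive_score(coefficient, real)

# A classifier that is right only half the time cannot tell the sets apart.
disc_score = discriminative_score([1, 1, 0, 0], [1, 0, 0, 1])
```

Here the synthetic sequence follows the same doubling rule as the real one, so the predictor trained on it transfers without error. Both scores are lower-is-better under these assumed definitions.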

Datasets and Results

Across datasets including Sines, Stocks, and Chickenpox, TsT-GAN outperformed the existing models in predictive accuracy, most notably on complex datasets such as Energy and Air Quality.

Visualisation

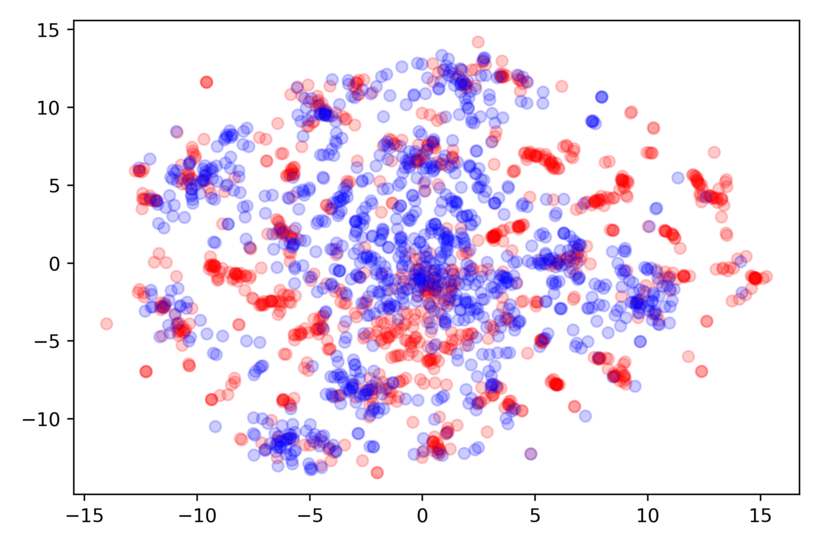

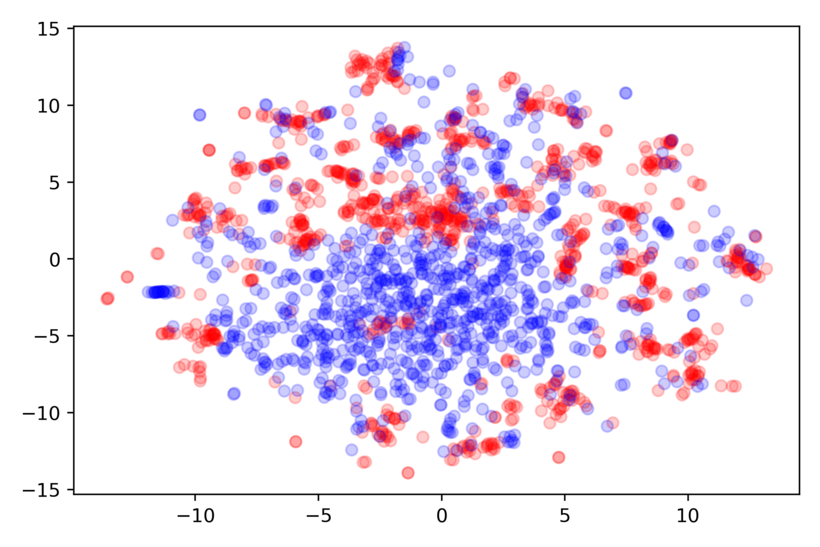

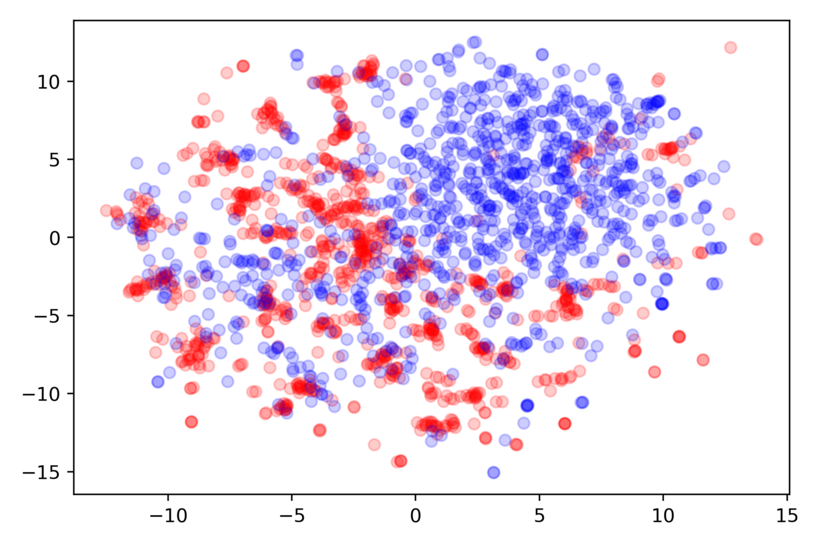

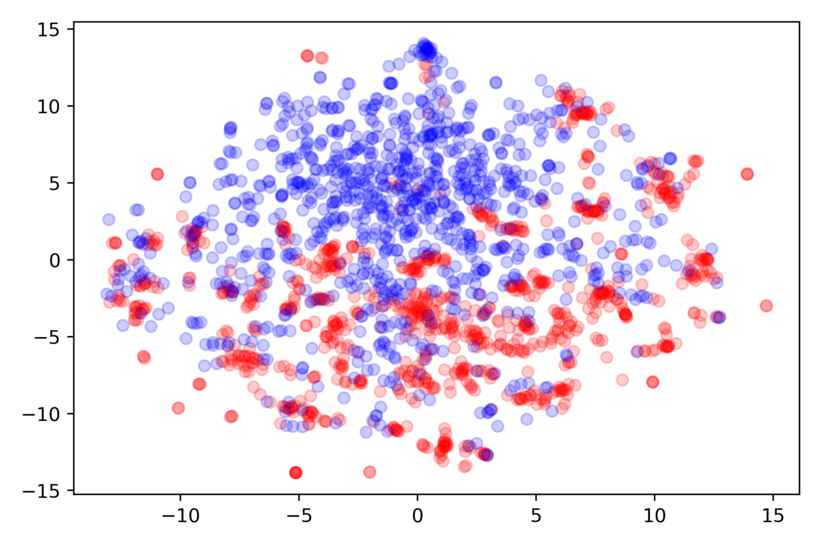

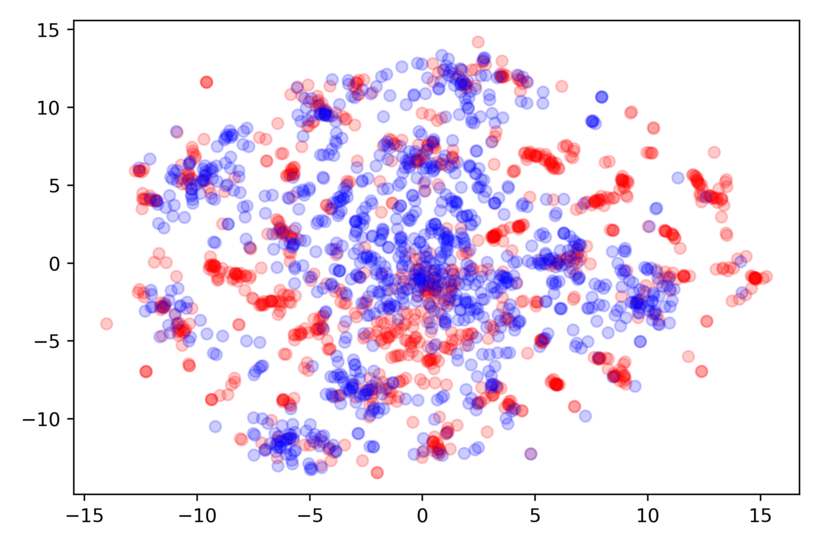

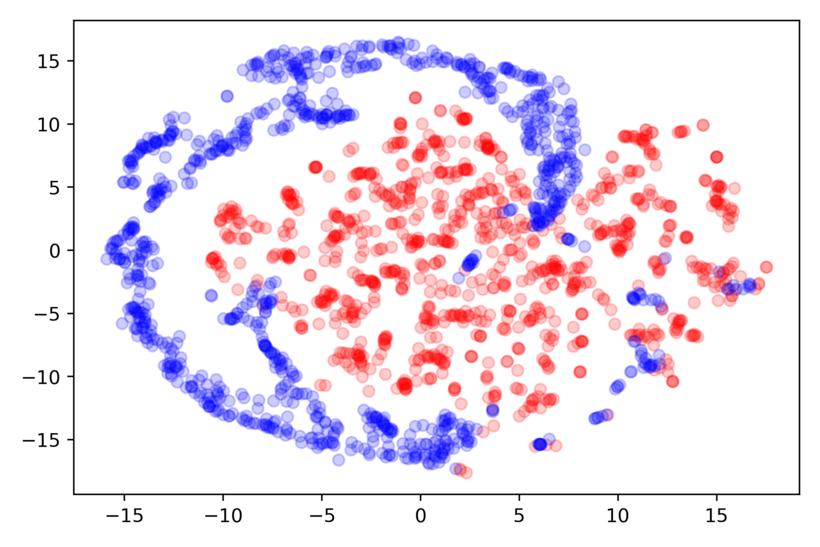

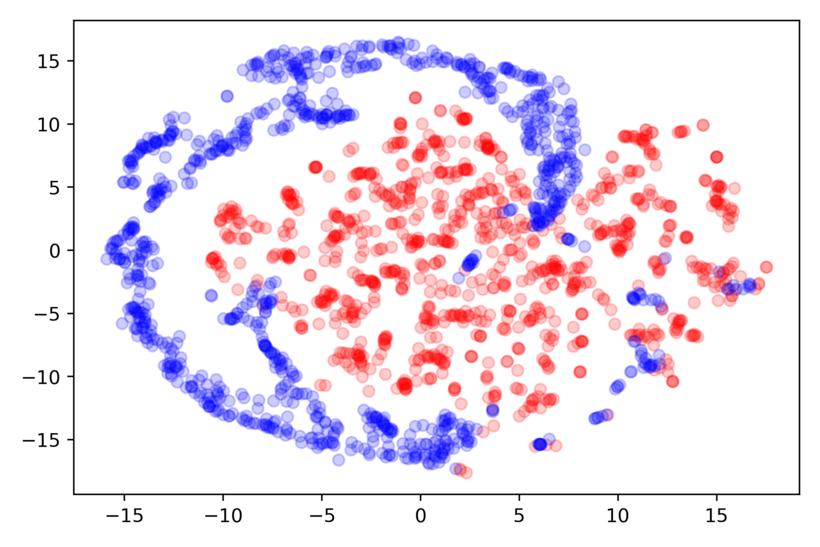

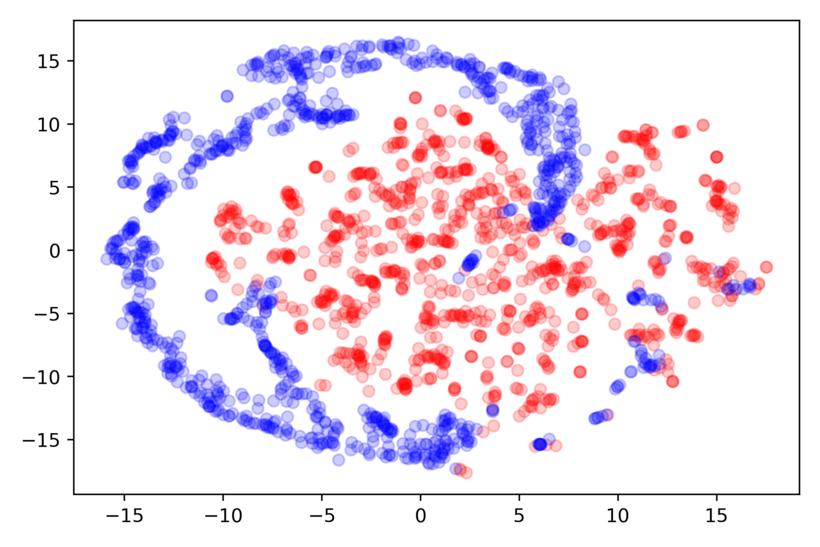

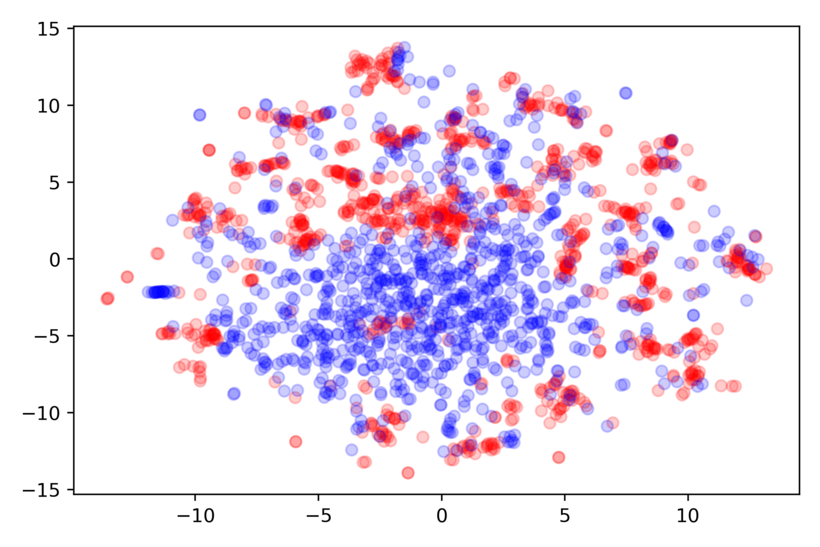

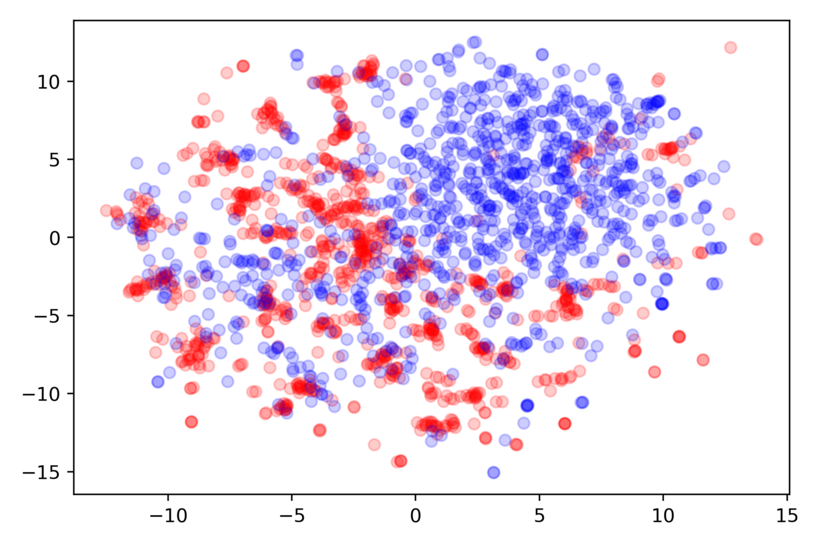

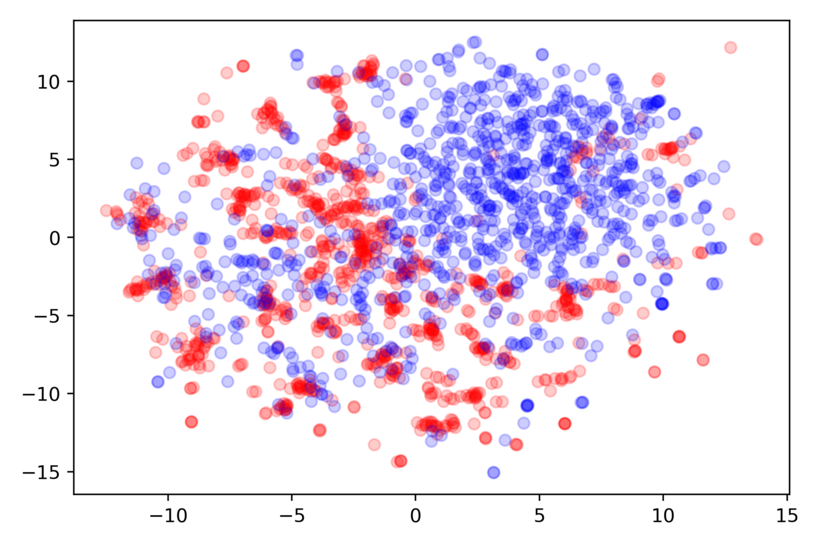

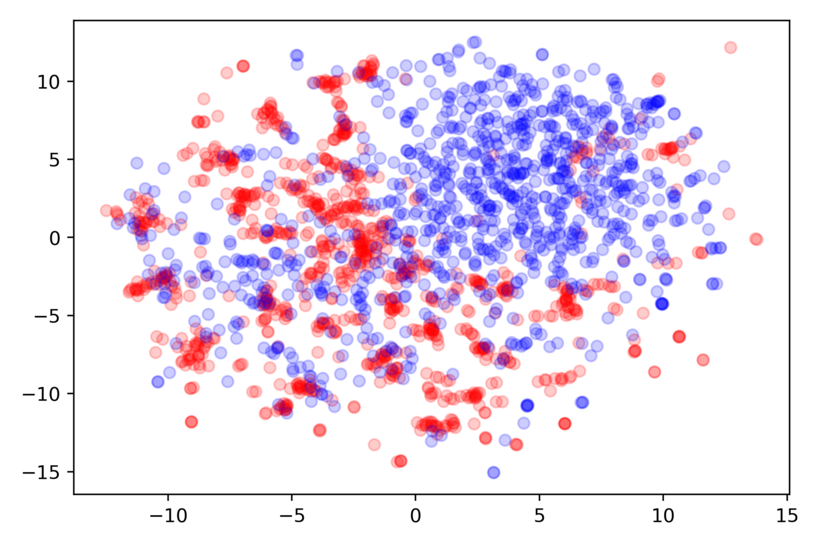

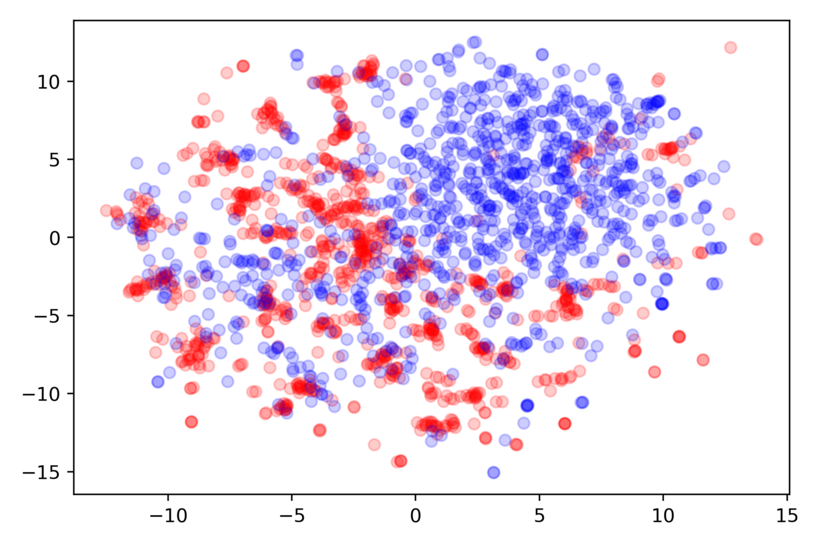

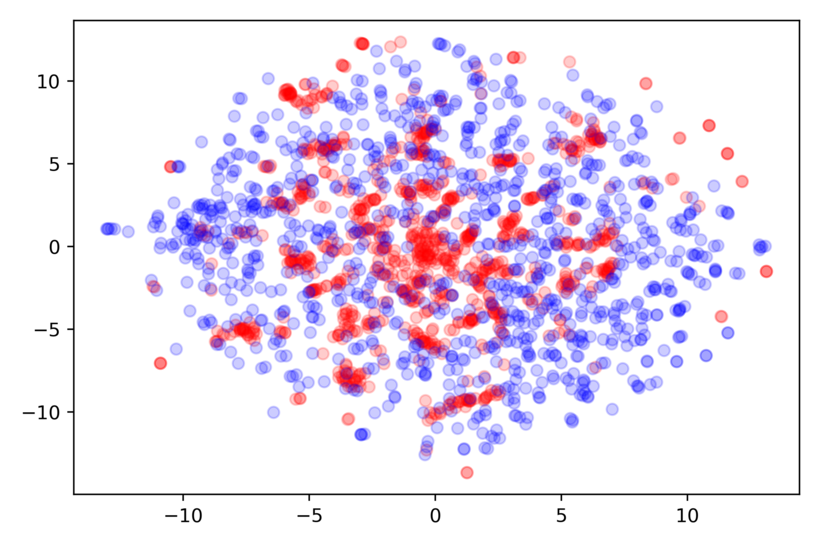

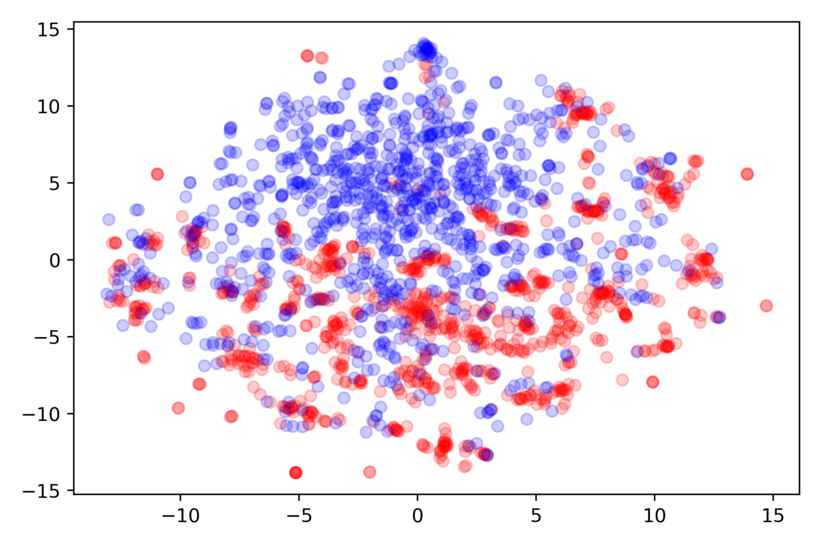

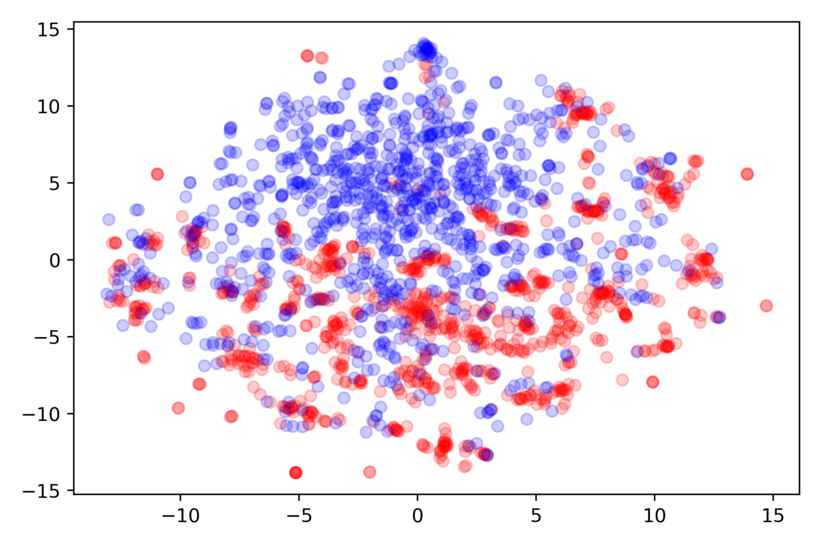

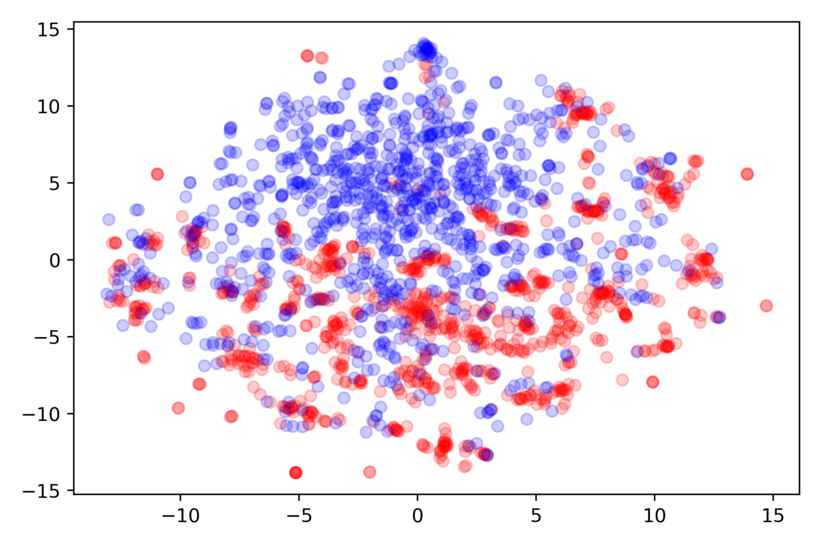

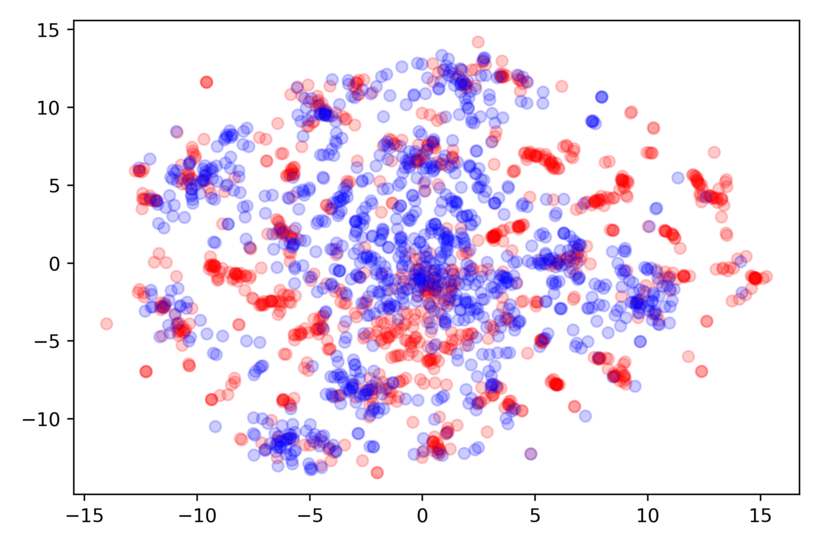

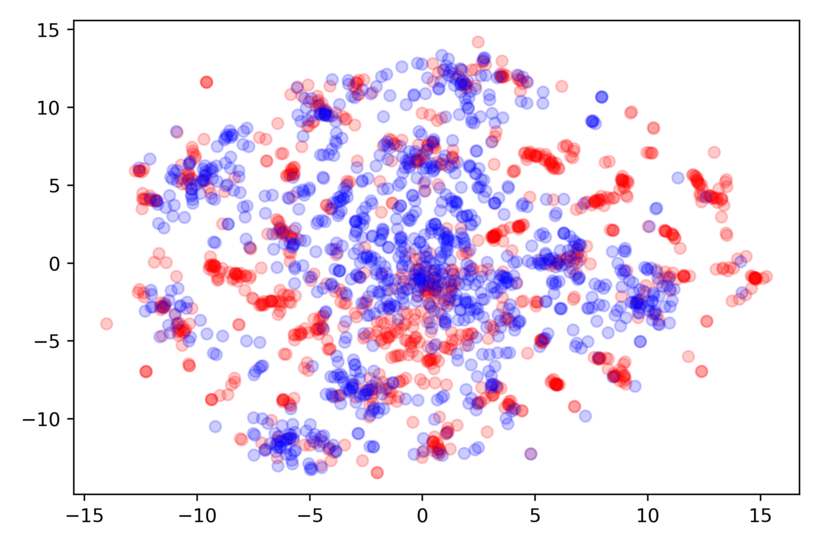

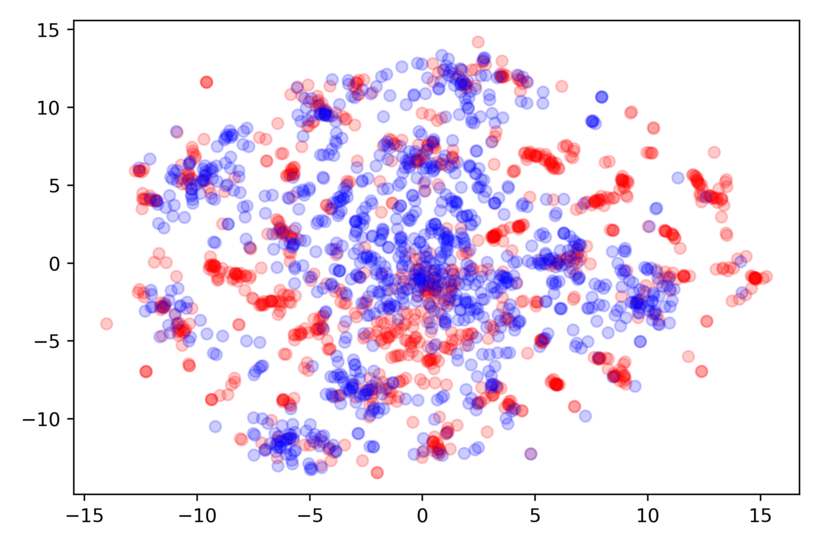

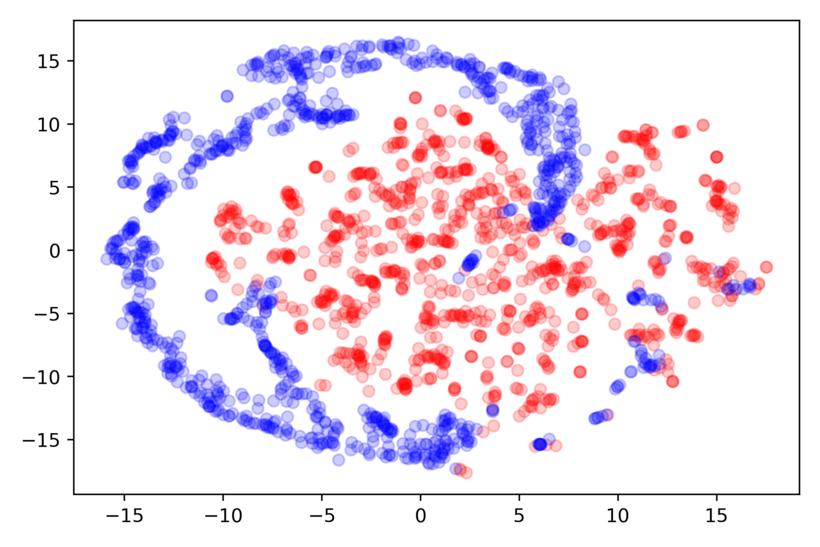

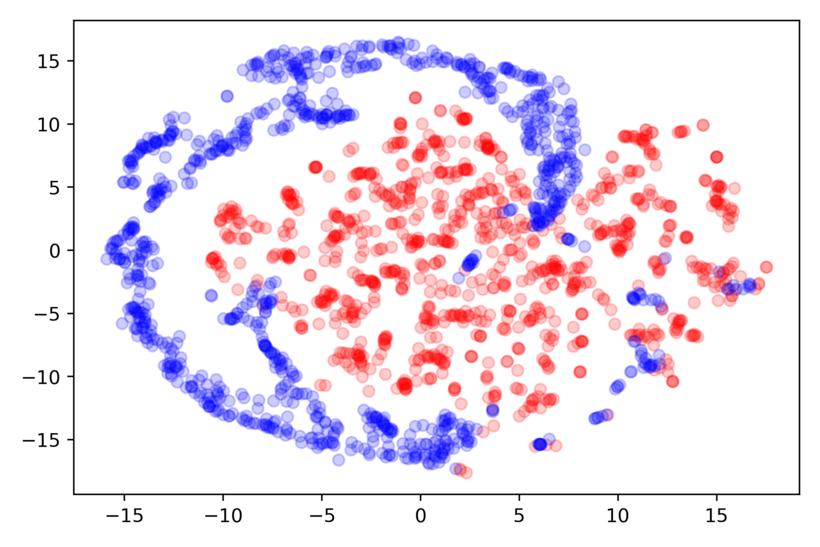

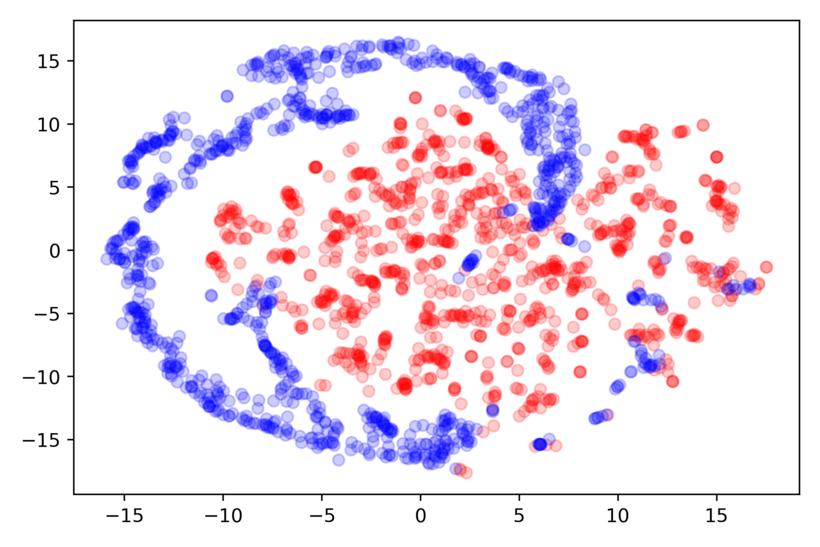

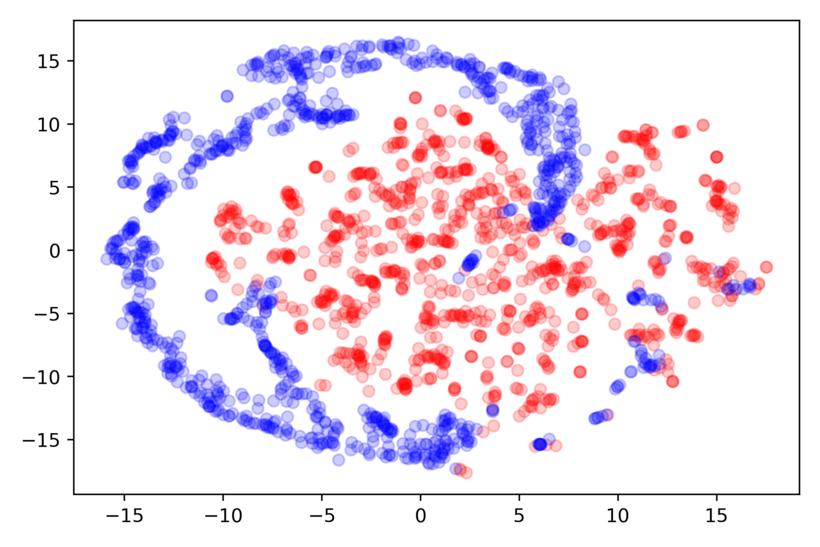

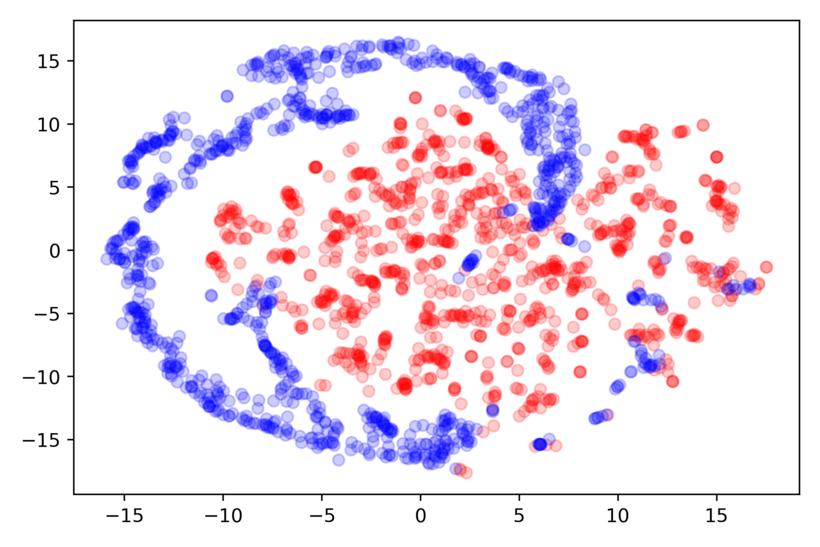

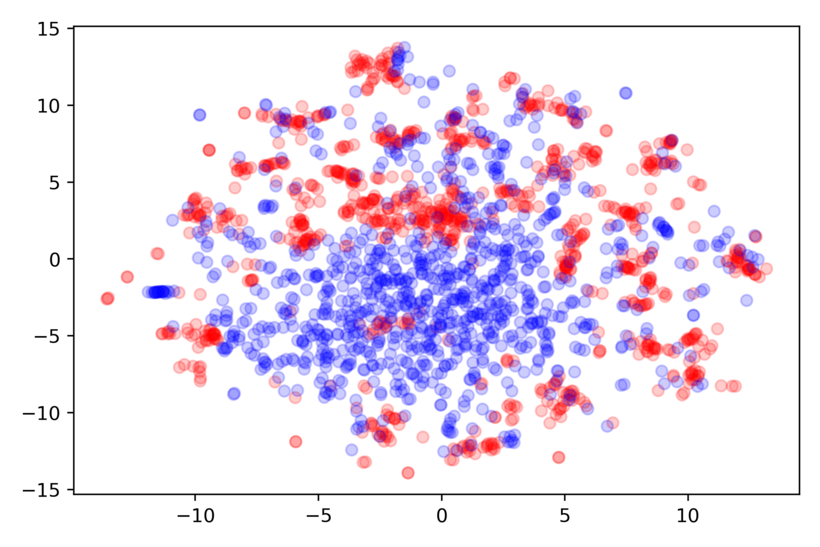

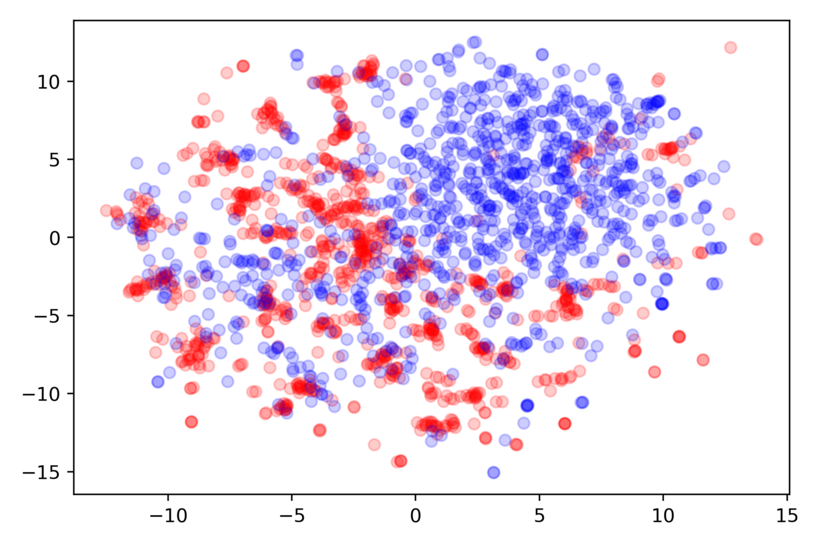

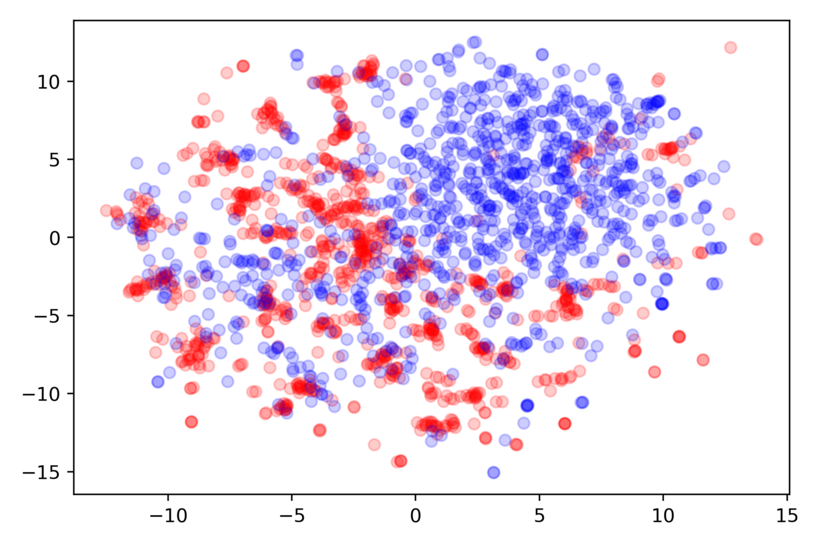

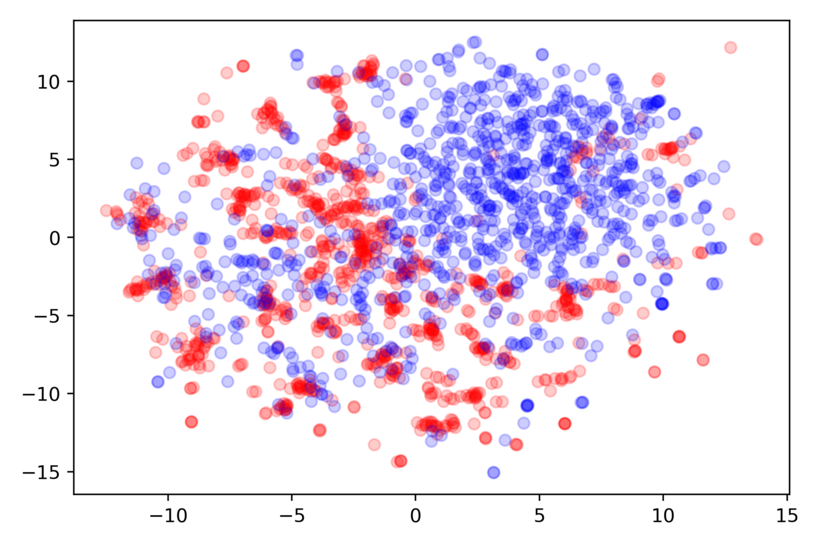

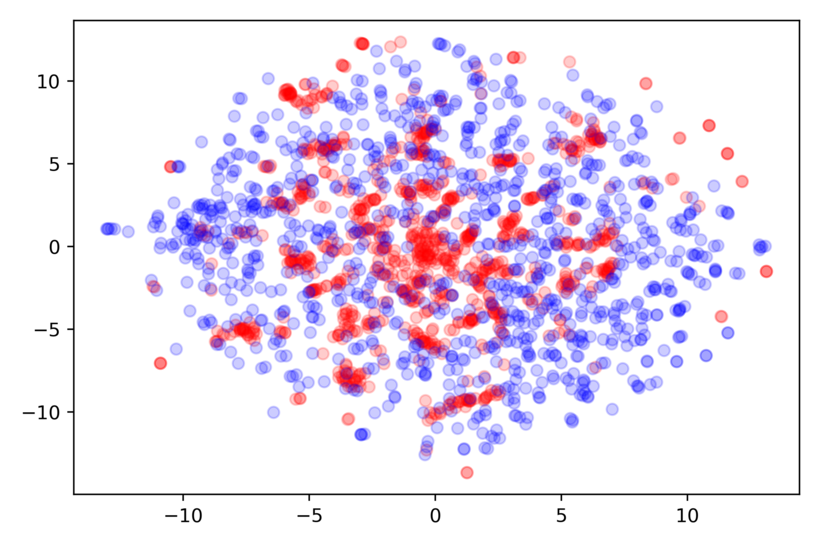

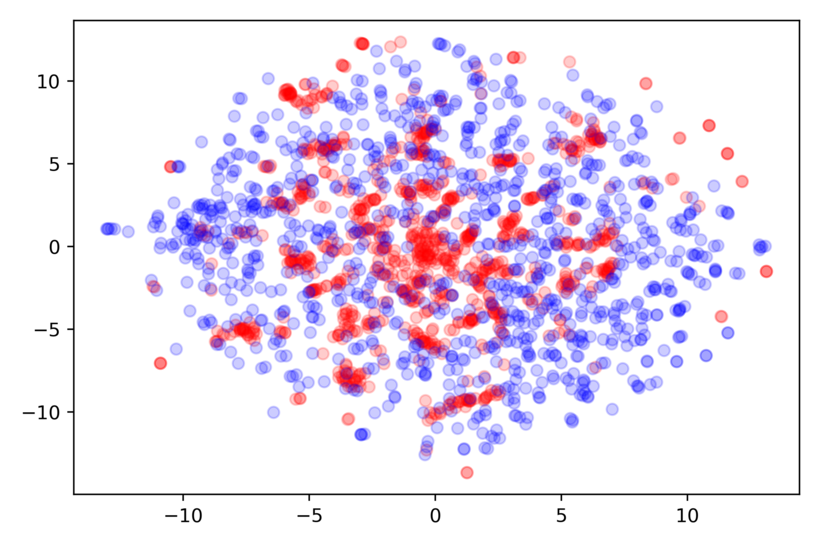

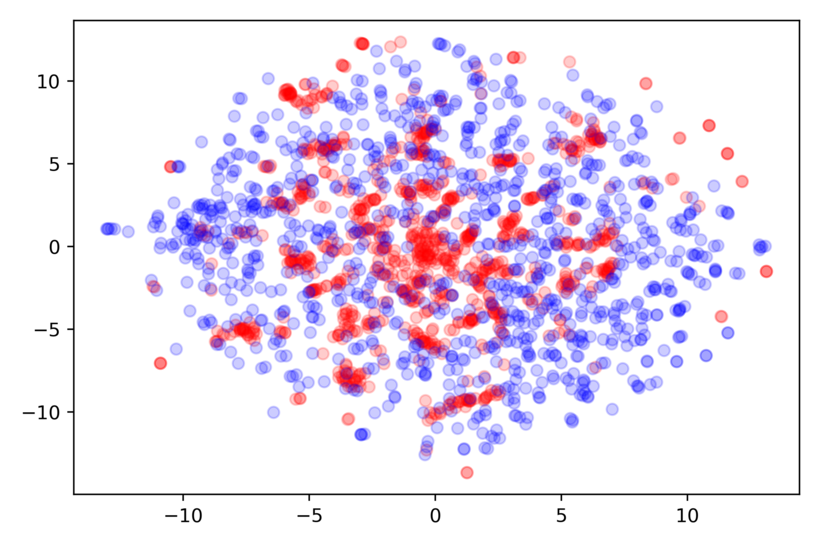

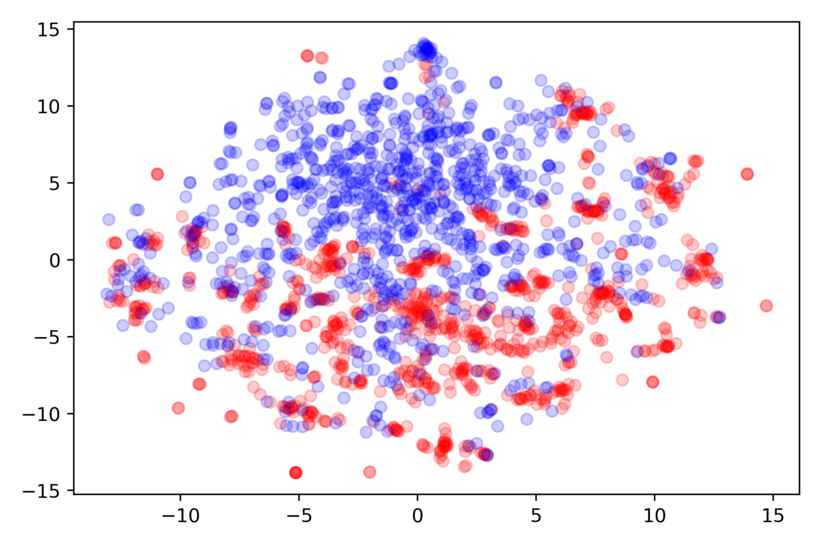

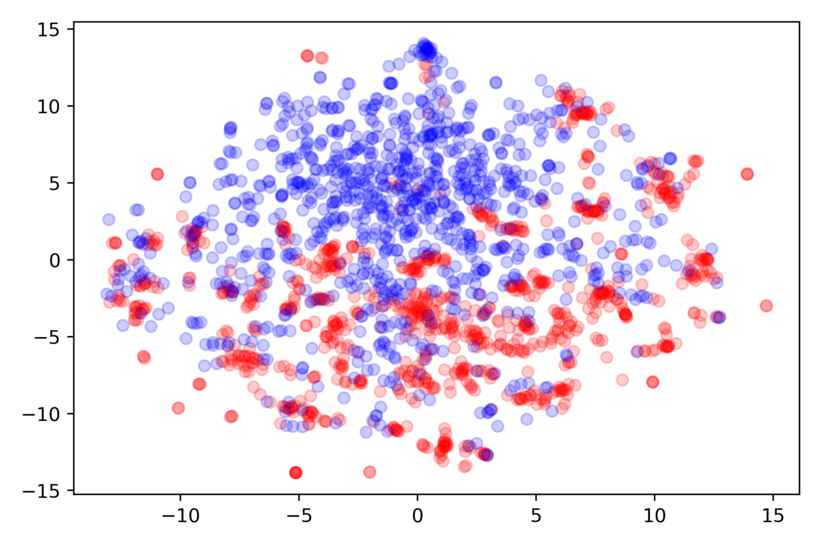

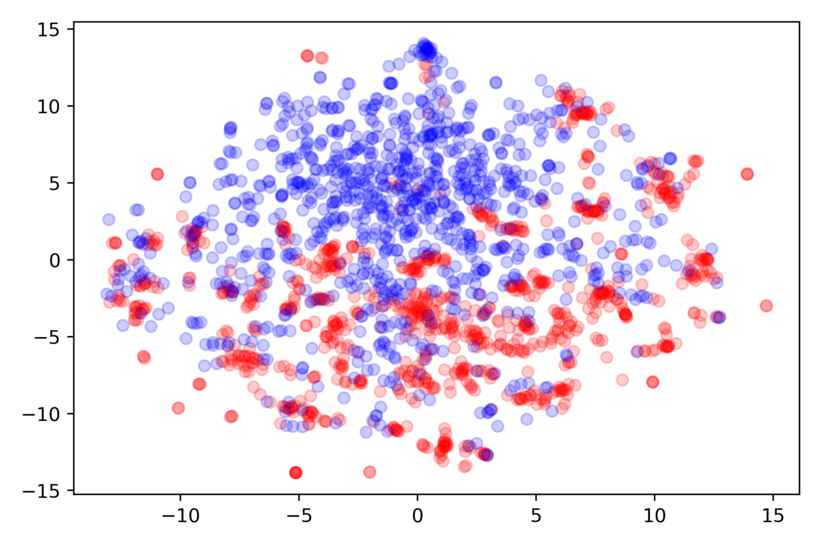

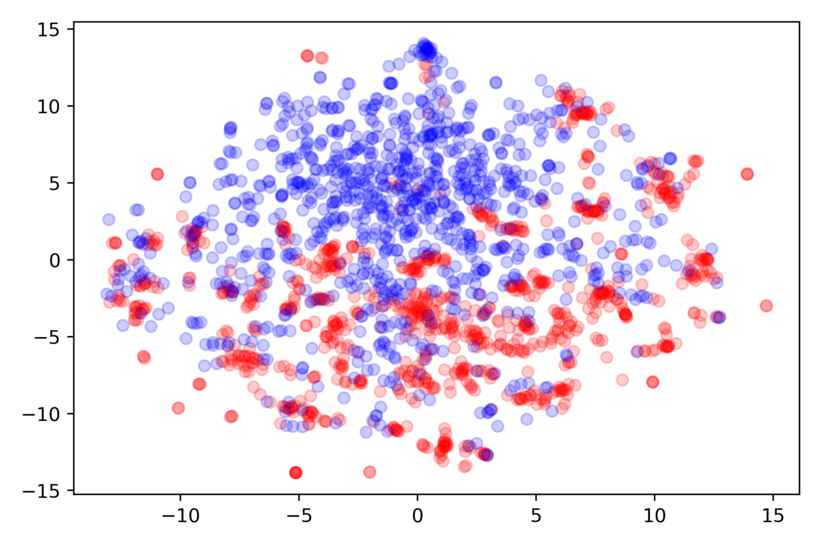

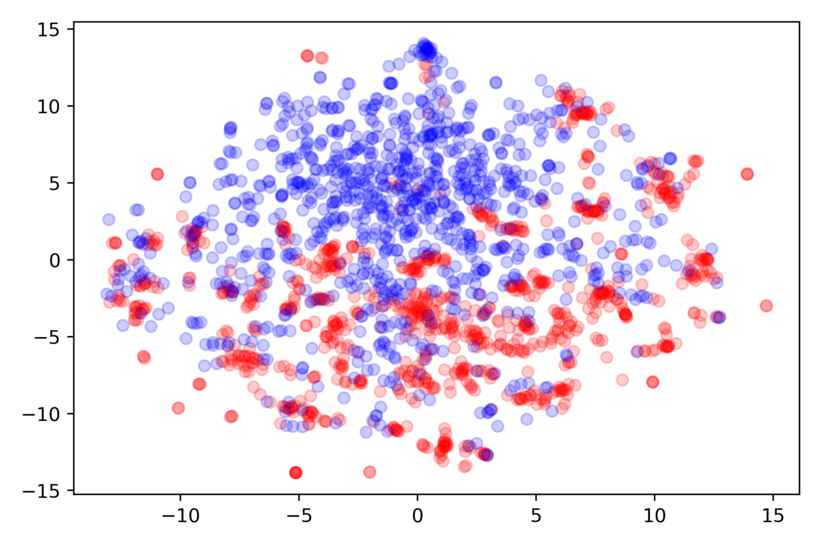

Figure 2: TsT-GAN.

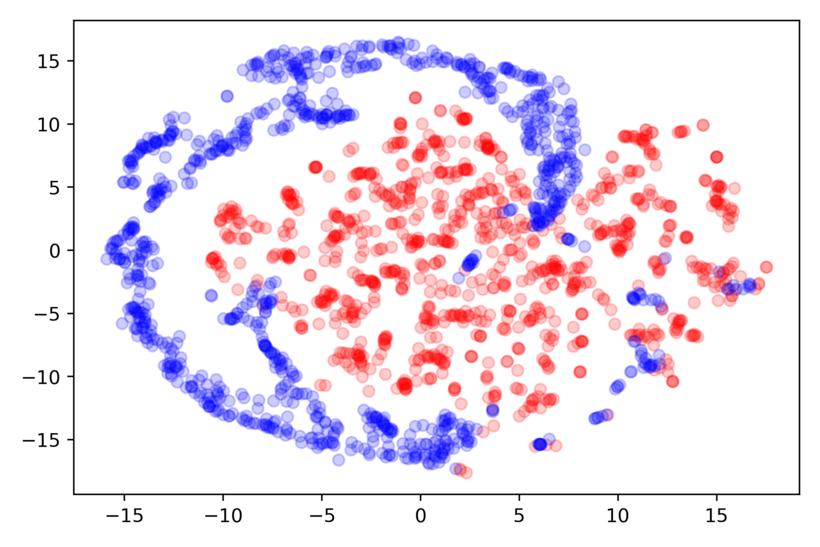

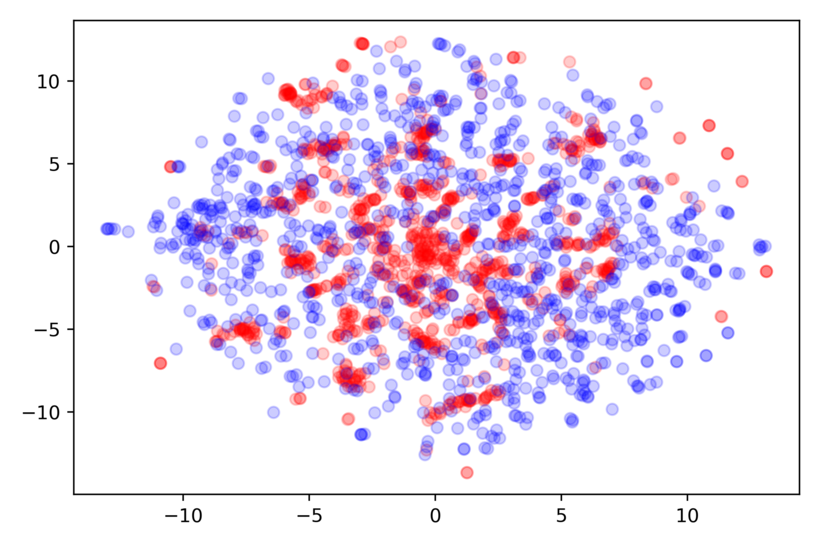

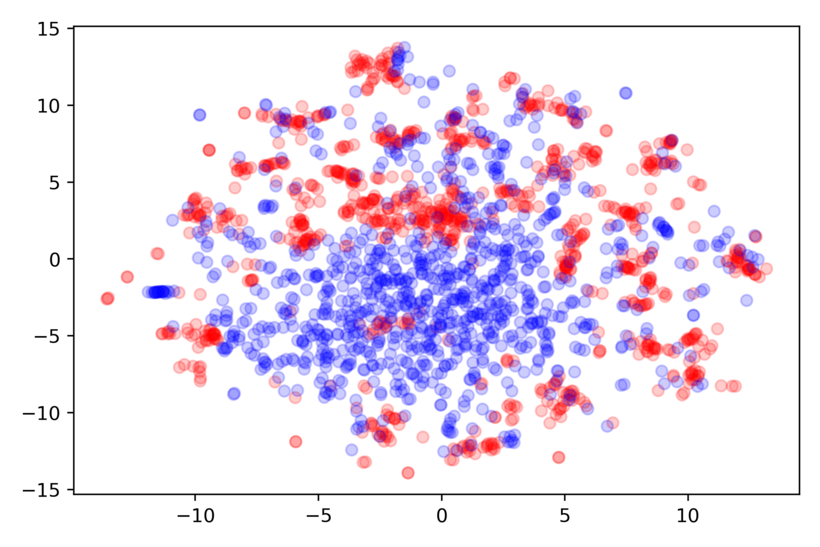

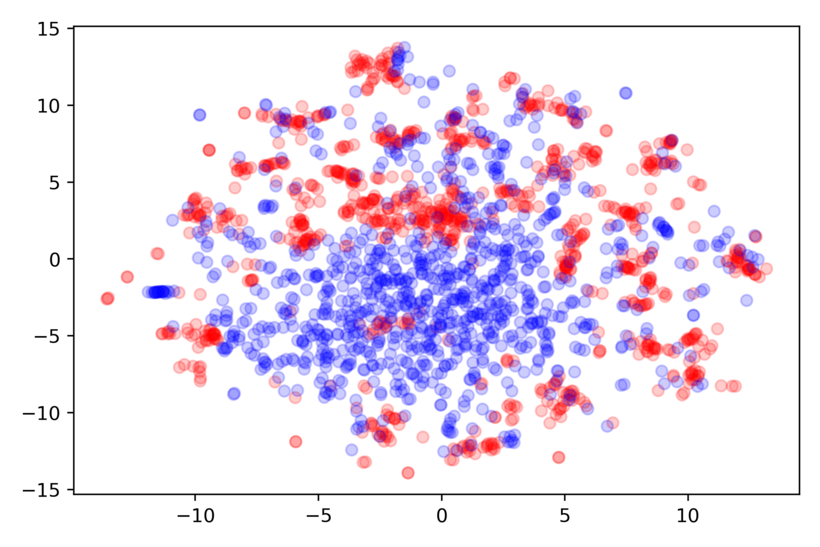

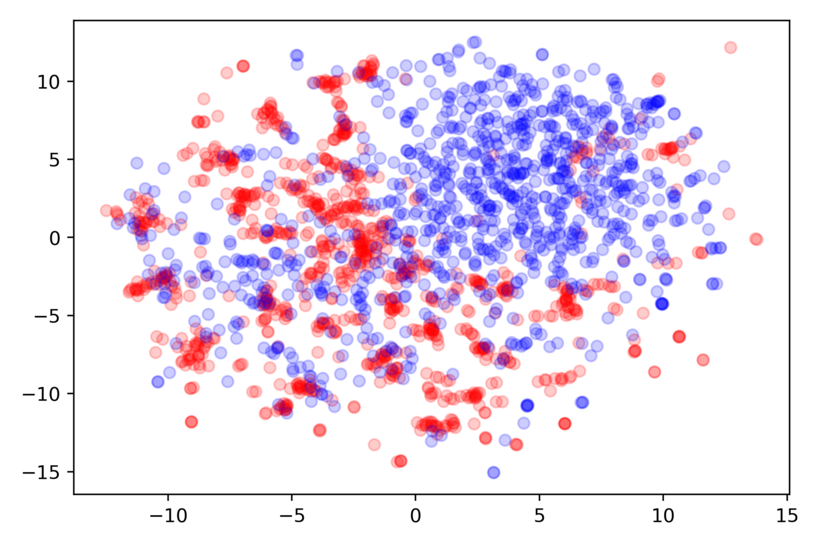

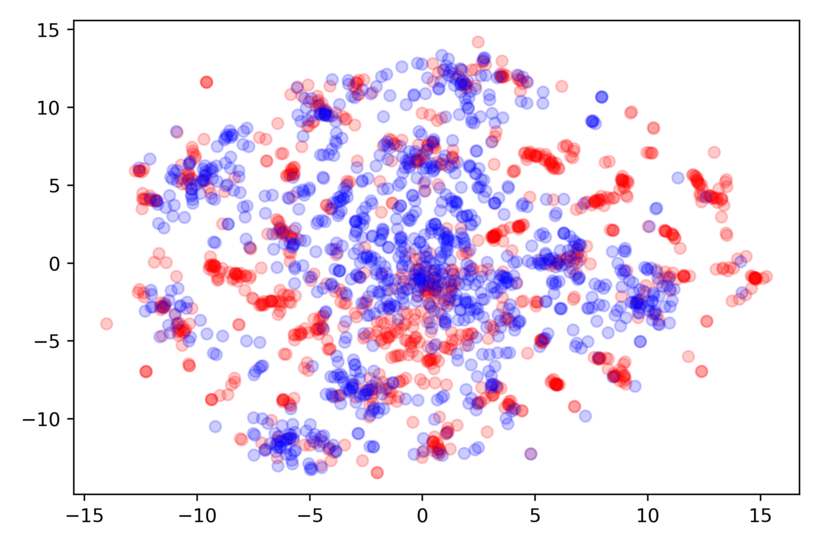

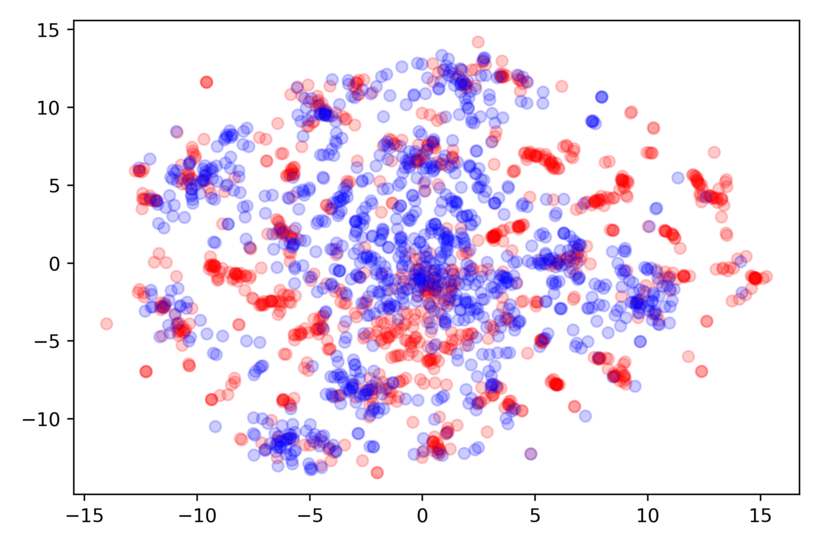

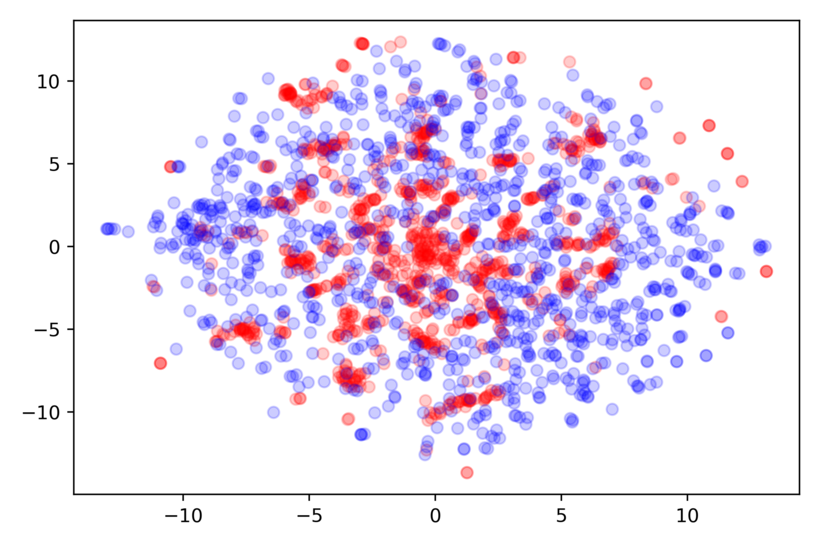

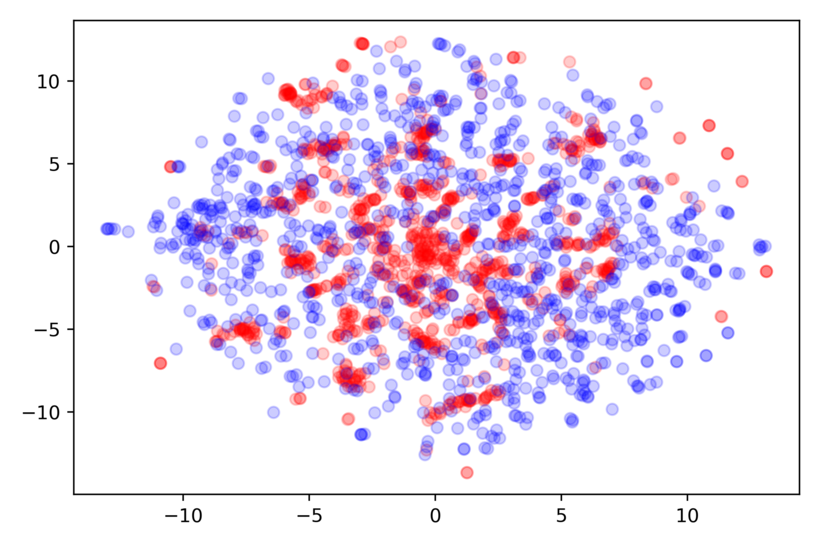

Figure 3: TsT-GAN.

TsT-GAN's synthesized data closely resembled real data distributions in t-SNE plots, demonstrating effective learning of inherent time-series dynamics. Figure 2 and Figure 3 support the claim that TsT-GAN can capture essential distribution features for various datasets.

Conclusion

TsT-GAN advances synthetic time-series generation by addressing two fundamental issues: capturing the data distribution and preserving stepwise modeling precision. The Transformer architecture adds computational cost, but the authors' results suggest that the gains in data utility justify it. Future work may explore a more unified framework for moment matching to align real and synthetic data distributions even more closely.