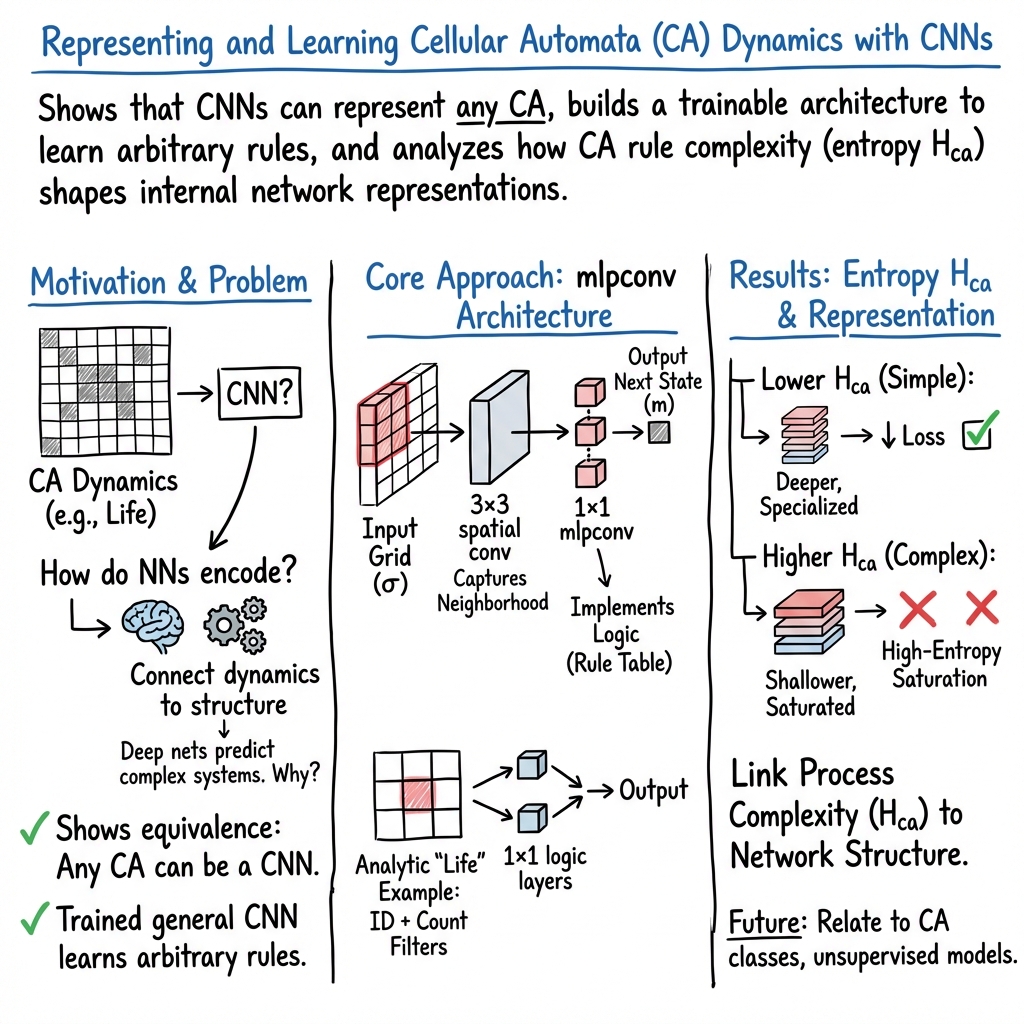

Cellular automata as convolutional neural networks

Abstract: Deep learning techniques have recently demonstrated broad success in predicting complex dynamical systems ranging from turbulence to human speech, motivating broader questions about how neural networks encode and represent dynamical rules. We explore this problem in the context of cellular automata (CA), simple dynamical systems that are intrinsically discrete and thus difficult to analyze using standard tools from dynamical systems theory. We show that any CA may readily be represented using a convolutional neural network with a network-in-network architecture. This motivates our development of a general convolutional multilayer perceptron architecture, which we find can learn the dynamical rules for arbitrary CA when given videos of the CA as training data. In the limit of large network widths, we find that training dynamics are nearly identical across replicates, and that common patterns emerge in the structure of networks trained on different CA rulesets. We train ensembles of networks on randomly-sampled CA, and we probe how the trained networks internally represent the CA rules using an information-theoretic technique based on distributions of layer activation patterns. We find that CA with simpler rule tables produce trained networks with hierarchical structure and layer specialization, while more complex CA produce shallower representations---illustrating how the underlying complexity of the CA's rules influences the specificity of these internal representations. Our results suggest how the entropy of a physical process can affect its representation when learned by neural networks.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Collections

Sign up for free to add this paper to one or more collections.